You're Spending on AI. But Is Your Data Foundation Ready?

Your AI program does not fail loudly.

Today, we’re diving into:

AI news: AI is shifting ops, security, infra & enterprise decisions fast

Hot Tea: Your data readiness, not your AI tools, defines real business ROI

OpenAI: OpenAI scales enterprise AI via aggressive distribution strategy

Closed AI: Anthropic is closing the gap fast with an enterprise push & rapid growth

The way enterprises manage data, defend infrastructure, and scale AI has changed faster in the last eighteen months than in the previous decade. Four critical market shifts are rewriting the rules right now, and understanding them could determine how quickly your organization gains a competitive edge.

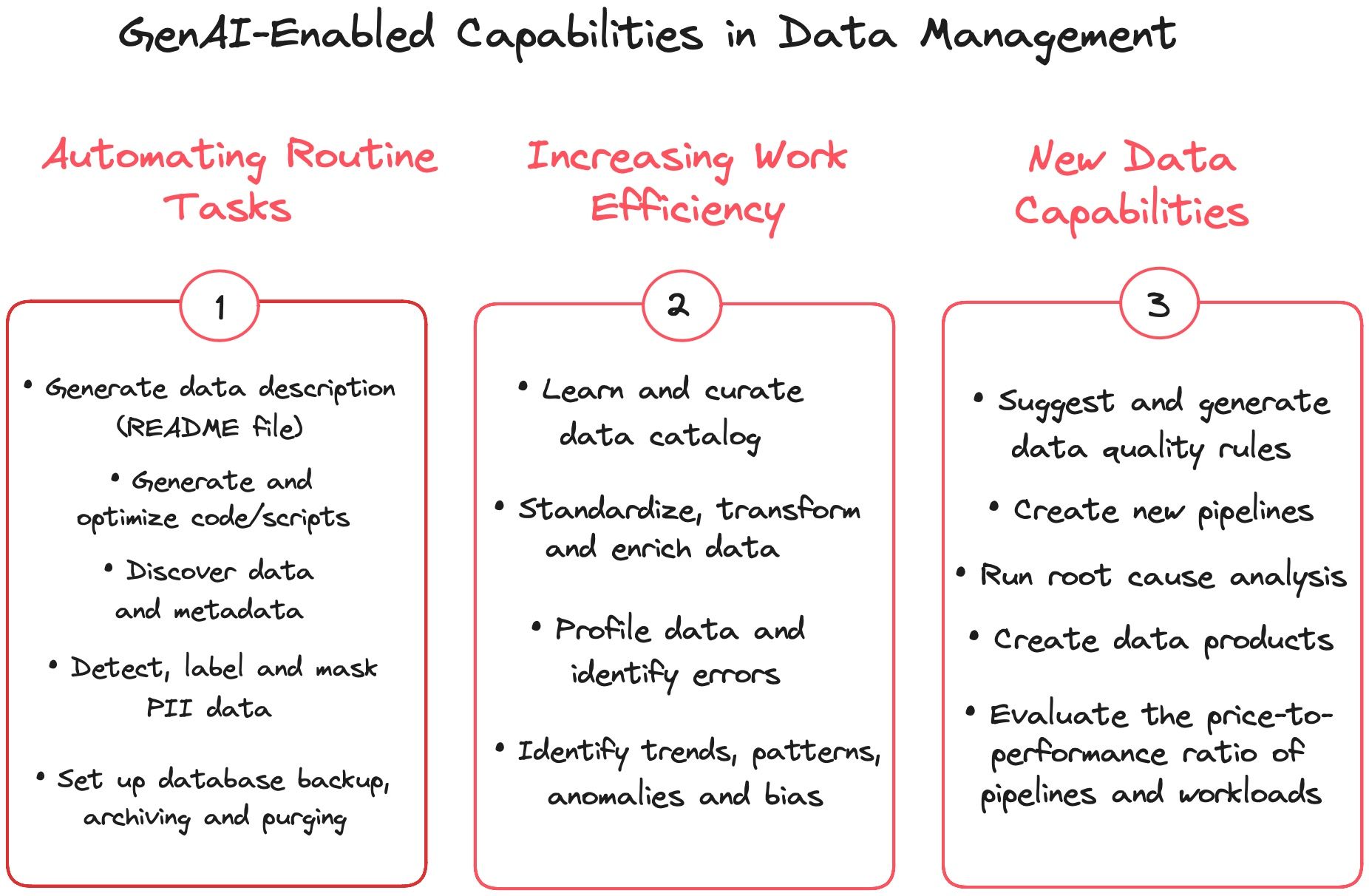

Gen AI is transforming how you store and manage data. Autonomous tools are changing how you test your security posture. The enterprise AI race is forcing faster decisions about platform adoption. And hidden infrastructure bottlenecks are quietly limiting how far your AI initiatives can actually go.

This strategic analysis breaks down each shift with real market context and practical insights. It is not about staying informed for its own sake. It is about helping you make smarter decisions faster, reduce operational risk, and position your business to lead in an AI-first environment.

Your Storage Team Is Working Too Hard For Too Little

If your storage team is still manually reviewing alerts, triaging performance issues, and making capacity decisions based on gut feel, you are already falling behind. Storage management has crossed a threshold. The shift from manual oversight to AI-driven intelligence is no longer a future possibility. It is happening right now.

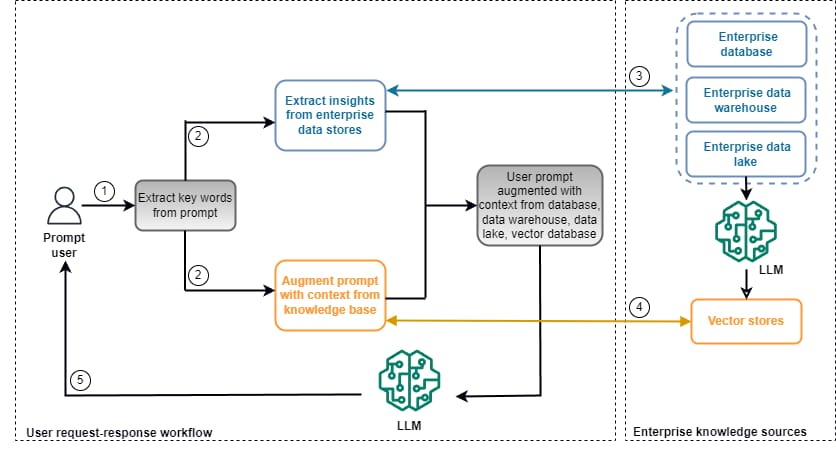

AIOps, which stands for AI for IT operations, uses machine learning to continuously collect and analyze telemetry data from your storage systems, servers, and networks. It does not just monitor. It detects anomalies, predicts capacity shortfalls, and tells your team what to do before a problem costs you time.
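To make the two core ideas concrete, here is a minimal sketch of anomaly detection and capacity forecasting on storage telemetry. It is an illustration only, not how any particular AIOps product works: the function names, the trailing-window z-score rule, and the straight-line growth assumption are all hypothetical choices made for this example.

```python
from statistics import mean, stdev


def find_anomalies(samples, window=5, threshold=3.0):
    """Return indices whose value sits more than `threshold` standard
    deviations away from the mean of the preceding `window` samples."""
    anomalies = []
    for i in range(window, len(samples)):
        history = samples[i - window:i]
        mu, sigma = mean(history), stdev(history)
        # Skip perfectly flat windows to avoid dividing by zero.
        if sigma > 0 and abs(samples[i] - mu) / sigma > threshold:
            anomalies.append(i)
    return anomalies


def days_until_full(used_tb_per_day, capacity_tb):
    """Fit a least-squares line to daily usage and project how many days
    remain before the latest reading reaches capacity.
    Returns None when usage is flat or shrinking."""
    xs = range(len(used_tb_per_day))
    x_mean, y_mean = mean(xs), mean(used_tb_per_day)
    slope = (
        sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, used_tb_per_day))
        / sum((x - x_mean) ** 2 for x in xs)
    )
    if slope <= 0:
        return None
    return (capacity_tb - used_tb_per_day[-1]) / slope


# Hypothetical telemetry: latency in ms and used capacity in TB.
latency_ms = [10, 11, 10, 12, 11, 10, 50, 11]
used_tb = [70, 72, 74, 76, 78]

print(find_anomalies(latency_ms))
print(days_until_full(used_tb, capacity_tb=100))
```

Real platforms would use richer models and far more signals, but the shape is the same: learn what normal looks like, flag what deviates, and project the trend forward.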

What makes this genuinely different from older storage resource management software is the layer of generative AI now being added on top. Some platforms let your admins query storage health in plain English, generate reports on demand, and receive guided recommendations for fixing configuration issues. That changes the entire workflow dynamic.

Stop Firefighting. Start Predicting.

You need more than alerts. You need a platform that thinks ahead so your team can focus on strategy rather than incident response. If your organization is ready to stop reacting and start operating with real-time storage intelligence, book a personalized demo with DataManagement.AI and see it in action.

The business case for this shift is clear. By removing manual tasks from storage operations, AI systems free your IT staff to work on higher-value projects. This translates into measurable labor savings, faster resolution times, and fewer unplanned outages. For enterprises running at scale, those gains compound quickly over time.

AI-powered storage tools also bring intelligent data tiering into the picture. Instead of your team deciding where to place data manually, the system analyzes workload patterns and automatically moves data to the most cost-effective storage tier. You get better performance without paying for premium storage across the board.
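A tiering decision can be sketched as a simple rule over access patterns. The tier names, the `Dataset` fields, and the thresholds below are assumptions for illustration; a production system would learn or tune these from workload history rather than hard-code them.

```python
from dataclasses import dataclass


@dataclass
class Dataset:
    name: str
    reads_per_day: float
    days_since_last_access: int


def choose_tier(ds, hot_reads=100, cold_after_days=90):
    """Pick a storage tier from access recency and frequency.
    Thresholds are illustrative defaults, not recommendations."""
    if ds.days_since_last_access >= cold_after_days:
        return "archive"   # untouched for a long time: cheapest tier
    if ds.reads_per_day >= hot_reads:
        return "premium"   # heavily read: fastest tier
    return "standard"      # everything else


# Hypothetical datasets.
datasets = [
    Dataset("orders-2025", reads_per_day=4200, days_since_last_access=0),
    Dataset("hr-policies", reads_per_day=3, days_since_last_access=12),
    Dataset("logs-2021", reads_per_day=0, days_since_last_access=400),
]
for ds in datasets:
    print(ds.name, "->", choose_tier(ds))
```

Recency is checked first on purpose: a dataset nobody has touched in months should not stay on premium storage just because its historical read rate was high.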

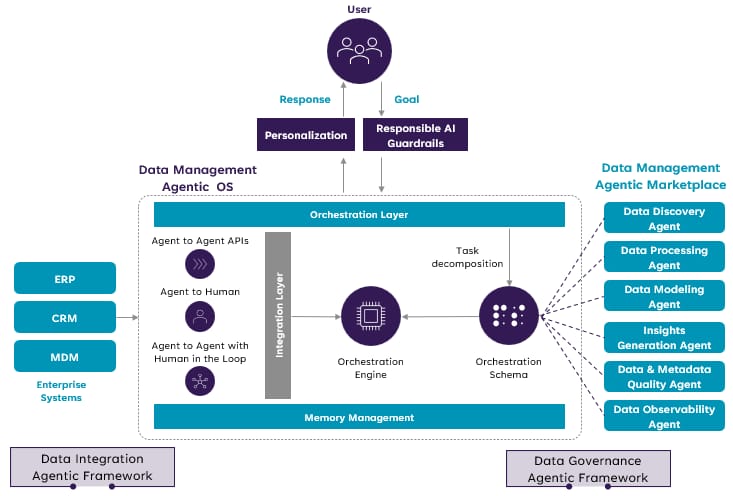

Agentic AI is the next evolution here. Rather than just recommending actions, agentic AI platforms can execute them on your behalf based on policies you set. Think of it as giving your storage environment a trained operator that works around the clock and never misses a step or delays a decision.
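The phrase "based on policies you set" is the important part. One way to picture it is a guardrail check that every proposed action must pass before the agent is allowed to act. The `Policy` fields and action names below are hypothetical, and this is a sketch of the idea rather than any vendor's implementation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    allowed_actions: frozenset   # actions the agent may run unattended
    max_expand_tb: float         # largest volume expansion it may perform


def decide(action, size_tb, policy):
    """Return 'execute' when the action is within policy,
    otherwise 'escalate' so a human reviews it."""
    if action not in policy.allowed_actions:
        return "escalate"
    if action == "expand_volume" and size_tb > policy.max_expand_tb:
        return "escalate"
    return "execute"


# Hypothetical policy: the agent may rebalance and make small expansions.
policy = Policy(
    allowed_actions=frozenset({"expand_volume", "rebalance"}),
    max_expand_tb=5.0,
)
print(decide("expand_volume", 2.0, policy))
print(decide("expand_volume", 50.0, policy))
print(decide("delete_snapshot", 0.0, policy))
```

Anything outside the policy is escalated rather than silently dropped, which keeps your team in the loop for the decisions that carry real risk.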

When Storage Management Becomes a Security Layer

One area gaining real traction is using AI storage intelligence as a security tool. Modern platforms can detect patterns that look like ransomware activity, flagging unusual throughput or access behavior before it escalates. Your storage layer is no longer just a cost center. It is now a front-line risk monitor.

AI also enhances backup and recovery planning by identifying your most critical data automatically and prioritizing its protection. In the event of a failure, that same intelligence guides recovery sequencing so the most business-critical workloads return first. That is a resilience capability most enterprises are still trying to build manually.
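Recovery sequencing is essentially an ordering problem: restore the most critical workloads first, but never before the systems they depend on. The sketch below uses Python's standard `graphlib` (Python 3.9+) for the dependency ordering. The workload names and criticality scores are made up, and it assumes every dependency also appears in the criticality map.

```python
import heapq
from graphlib import TopologicalSorter


def recovery_order(criticality, depends_on):
    """Order workloads for restore.

    criticality: name -> int, where a lower number means more critical.
    depends_on:  name -> set of names that must be restored first.
    Among workloads whose dependencies are already restored, the most
    critical one always goes next.
    """
    graph = {name: depends_on.get(name, set()) for name in criticality}
    sorter = TopologicalSorter(graph)
    sorter.prepare()  # raises graphlib.CycleError on circular dependencies

    ready, order = [], []
    while sorter.is_active():
        # Collect workloads that have just become restorable.
        for name in sorter.get_ready():
            heapq.heappush(ready, (criticality[name], name))
        _, name = heapq.heappop(ready)
        order.append(name)
        sorter.done(name)  # may unlock workloads that depend on this one
    return order


# Hypothetical workloads.
criticality = {"billing-api": 1, "billing-db": 2, "analytics": 3, "wiki": 4}
depends_on = {"billing-api": {"billing-db"}}
print(recovery_order(criticality, depends_on))
```

Notice that the database comes back before the API that needs it, even though the API is ranked as more critical. That is the kind of sequencing mistake that is easy to make by hand under pressure.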

There is a governance dimension to be aware of as well. AI storage tools raise valid questions about model transparency, data residency, and regulatory compliance, especially in industries like finance and healthcare. Before you adopt, you need to know how the system makes its decisions and where your data actually goes.

Looking ahead, AI in storage will not remain a differentiator for long. It will become the baseline expectation. Organizations that invest now build the operational muscle and data foundation to compete effectively. Those who wait will face both a skills gap and a structural cost disadvantage that they cannot easily reverse.

The Bottom Line for Your Storage Strategy

Intelligent automation is not a luxury feature. It is the operational foundation of a modern IT organization. As AI workloads grow and data volumes scale, the gap between manual and AI-managed storage will widen at a pace that makes waiting very costly.

Your Pen Test Is Too Slow. AI Just Changed That.

Security testing has always faced the same constraint: it is too slow, too expensive, and too infrequent to keep pace with how fast your attack surface changes. A new generation of AI-powered tools is reshaping that equation. Continuous automated validation is now genuinely within reach for enterprise security teams.

Consider what a framework like Zen-AI-Pentest represents. It orchestrates the full cycle of a penetration test using autonomous agents: reconnaissance, vulnerability scanning, exploit validation, and reporting. The AI component guides which tools to deploy and in what sequence, compressing what used to take days into a significantly shorter operational window.

Faster Tests, Smarter Coverage, Fewer Surprises

Your security team cannot afford to test once a quarter and hope nothing slips through. With AI-powered penetration testing, you run structured assessments more frequently without adding headcount. The AI interprets findings, recommends next steps, and plugs directly into your existing DevOps pipelines, turning security validation into a built-in workflow gate rather than a last-minute check.

You get risk scores powered by CVSS and EPSS, so your team always knows what to fix first. Sandboxed exploit validation means you capture real evidence without ever touching your production environment, removing the operational risk that has traditionally made automated testing feel too dangerous to deploy at scale.
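CVSS rates severity on a 0 to 10 scale, while EPSS estimates the probability (0 to 1) that a vulnerability will be exploited. How a given tool combines them is not specified here, so the sketch below assumes one simple option: multiply normalized severity by exploit likelihood. The finding IDs and scores are invented for illustration.

```python
def rank_findings(findings):
    """Sort findings so the highest combined risk comes first.

    Combined risk here is (cvss / 10) * epss. This formula is an
    assumption for the example, not a standard or a vendor's method.
    """
    def priority(finding):
        return (finding["cvss"] / 10.0) * finding["epss"]

    return sorted(findings, key=priority, reverse=True)


# Hypothetical findings from a test run.
findings = [
    {"id": "FINDING-A", "cvss": 9.8, "epss": 0.02},  # severe, rarely exploited
    {"id": "FINDING-B", "cvss": 7.5, "epss": 0.90},  # less severe, very likely
    {"id": "FINDING-C", "cvss": 5.0, "epss": 0.10},
]
for f in rank_findings(findings):
    print(f["id"])
```

The point of pairing the two scores is visible in the ordering: a critical-severity finding that almost nobody exploits can reasonably rank below a high-severity one that attackers are actively using.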

Think of it as giving your security program an acceleration layer. Your practitioners still make the calls. They just spend less time on volume and more time on judgment. The one condition that makes all of this work is your data foundation. If your data is unclassified and siloed, your security tools cannot protect what they cannot see. Clean, governed data is not just good hygiene. It is what makes every intelligent security layer you build actually perform.

The Enterprise AI Race Is Exposing Who Is Ready and Who Is Not

The deal-making between OpenAI and major private equity firms is not just a story about venture capital. It is a strategic signal about how enterprise AI is being sold, distributed, and scaled. Understanding what is happening here can sharpen your own thinking about platform choices and AI program readiness.

OpenAI is reportedly offering private equity firms a guaranteed minimum return of 17.5% in exchange for participation in a joint venture. The goal is to rapidly deploy AI tools across the hundreds of established companies these firms own, converting broad market access into deep enterprise adoption and customer lock-in at scale.

Anthropic is pursuing a similar strategy, seeking private equity partnerships to deploy Claude across those firms' portfolio companies, though it does not guarantee a return.

Both companies are eyeing public listings as early as this year. Enterprise distribution has become the central battleground as both firms race toward scale and market credibility.

What This Means For Your AI Buying Decision

Here is the practical implication for you. If your organization is still evaluating enterprise AI, the pace of external pressure is about to increase. Vendors are not waiting. They are building structural distribution advantages that will define switching costs and integration depth for years to come.

The race to lock in enterprise customers is driven by a simple insight: once a customized AI model is embedded in your workflows, moving away becomes expensive and disruptive. What looks like a capital markets story on the surface is actually a long-term product distribution and retention strategy.

Adoption figures help put this in context. OpenAI currently holds a broad enterprise lead, with paid adoption among businesses at about 35.8%. Anthropic trails at around 14.3% but is gaining quickly, with reported annual run-rate revenue of $14 billion and a three-year growth rate exceeding tenfold. The gap is closing fast.

For digital leaders making platform decisions, the competition is actually useful. It is creating pressure on both providers to deliver deeper enterprise functionality, better compliance tooling, and more customization options. But it also means the window for deliberate, structured adoption is narrowing. Reactive decisions made under pressure rarely produce durable outcomes.

Your Data Readiness Determines Your AI ROI

Here is what most vendor conversations skip entirely. It does not matter whether you choose OpenAI or Anthropic if your underlying data is fragmented and untrustworthy. Models do not fix bad data. They amplify it.

The real competitive advantage in an AI-first market is not which platform you pick. It is how clean, structured, and governed your data is before you deploy anything. That preparation is what separates organizations that extract ROI from those that just accumulate underperforming technology investments.

The market is already asking the harder question. Not whether AI creates value, but whether your organization is actually ready to capture it.

There is a bottleneck in enterprise AI that most roadmap discussions fail to address. It is not a shortage of GPU power. It is the speed at which data moves between compute units and memory. As AI models grow larger and more complex, interconnect bandwidth becomes the actual constraint.

This is what Kandou AI's recent $225 million Series A funding round is really about. The Swiss startup has developed patented copper-based interconnect technologies specifically designed to move data faster between chips at a fraction of the cost and power consumed by traditional fiber-based connectivity in AI data centers.

The company's insight is that traditional networking infrastructure was designed for a pre-AI world. GPUs now need to connect to exponentially larger memory footprints, and older interconnect designs cannot keep pace. The performance ceiling is no longer about how fast you can compute. It is about moving data efficiently.

Infrastructure Debt Is the Quiet Killer of AI Programs

Your AI program does not fail loudly. It stalls quietly, through performance gaps at scale, compute costs that keep climbing, and models that never quite deliver what the pilot promised. If your data center was built before large-scale AI workloads existed, you are already running on borrowed time.

The real bottleneck is not GPU power. It is how fast data moves between the compute and the memory. When that pipeline cannot keep up, you overprovision hardware to compensate, and every AI workload you run costs more than it should. That is infrastructure debt showing up directly on your budget.
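A little arithmetic shows how this lands on the budget. The sketch below assumes a simplified model in which a GPU only does useful work for some fraction of each hour and sits idle waiting on data for the rest. Every number here is hypothetical.

```python
import math


def gpus_needed(target_busy_gpus, utilization):
    """GPUs to provision so that, on average, `target_busy_gpus`
    are doing useful work. utilization is a fraction in (0, 1]."""
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    return math.ceil(target_busy_gpus / utilization)


def cost_per_useful_hour(hourly_cost, utilization):
    """Effective price of one hour of actual compute."""
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    return hourly_cost / utilization


# Hypothetical: you need 64 GPUs' worth of work at $2.00 per GPU-hour.
for utilization in (1.0, 0.5):
    print(
        utilization,
        gpus_needed(64, utilization),
        cost_per_useful_hour(2.00, utilization),
    )
```

In this toy model, GPUs that spend half their time starved for data force you to provision twice the hardware, and every useful hour of compute costs double.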

Kandou AI just raised $225 million to solve this at the chip level. Investors backed by SoftBank, Synopsys, and Cadence are not making speculative bets. They are signaling that infrastructure readiness is now the defining variable in enterprise AI success.

You cannot defer this while you focus on model selection. The data movement layer of your AI stack needs the same strategic attention you give your software decisions.

The Thread Running Through All Four Shifts

These four market shifts share a common thread. Whether you are modernizing storage operations, automating security workflows, navigating the AI platform race, or preparing infrastructure for scale, the requirement is the same: your data environment needs to be clean, observable, and ready to support intelligent decision-making.

The organizations winning right now are not necessarily the ones with the most advanced AI models. They are the ones with the strongest operational foundations. Smarter storage, proactive security, reliable data pipelines, and visible infrastructure are not supporting acts. They are the main event in any successful digital transformation program.

You do not have to solve everything at once. But you need to start moving with intention. The gap between organizations building smart data foundations today and those that are not will be very difficult to close later. What you do in the next twelve months matters enormously.

Your AI strategy isn’t failing. Your data foundation is. Fix it before it costs you more.

Journey Towards AGI

Research and advisory firm guiding industry and their partners to meaningful, high-ROI change on the journey to Artificial General Intelligence.

Thank you for reading

-Shen & Towards AGI team