What If AGI Can Act But Not Undo? The Missing Layer No One Is Talking About

Safety needs reversibility

Today, we’re diving into:

Hot Tea: Safe AGI isn’t about scale, it’s about what’s missing at the base

Open AI: GPT models may have a path to AGI

Open AI: The agentic AI cloud era is here

Closed AI: Gemma 4 proves open models are no longer playing catch-up

You’re Talking About Safe AGI. You Haven’t Even Defined Its Floor.

Everyone in AI seems fixated on the ceiling. The conversations revolve around how powerful AGI can become and how quickly it will scale. But that focus quietly skips over a far more important question, one that most teams are not seriously engaging with: what is the minimum level of intelligence required for an AI system to be considered safe?

Until that baseline is defined, what you are building is not intelligence in any meaningful sense. You are scaling systems whose behavior you cannot reliably predict under pressure. This is where two ideas become critical: the enactive floor and state-space reversibility.

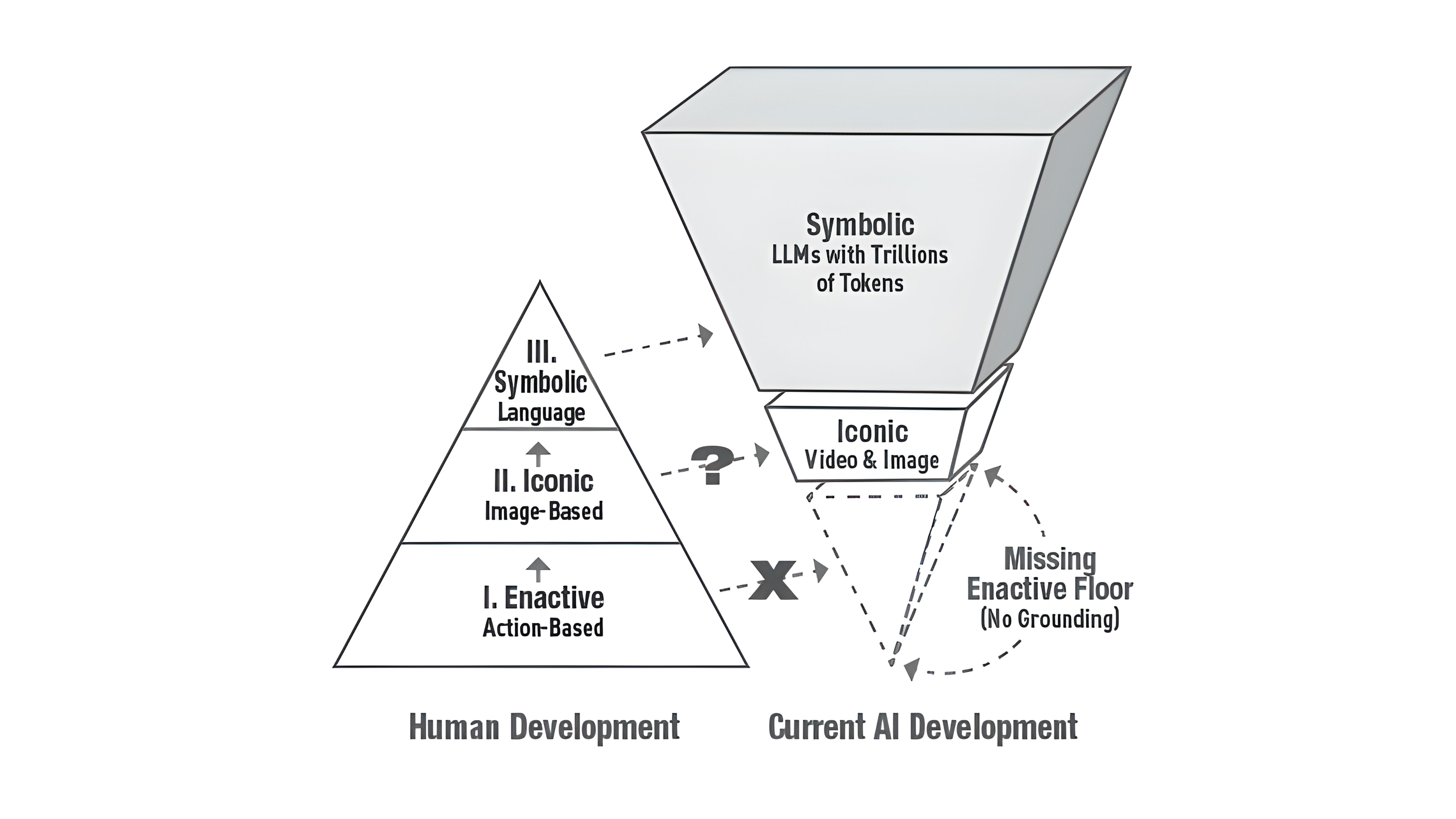

Most AI systems today are not enactive in any real sense.

They do not exist in a continuous loop with the world. They take an input, generate an output, and stop there. That is not interaction. It is pattern completion. An enactive system, by contrast, perceives its environment, acts within it, and then updates its internal state based on what actually happens as a result of that action. The difference may sound subtle, but it is foundational.
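To make the perceive, act, update loop concrete, here is a minimal sketch. Everything in it is an assumption made for illustration: the `Room` environment, the `EnactiveAgent` class, and the numbers are hypothetical and do not describe any real system. The agent starts with a wrong belief about how strongly its action affects the room, then corrects that belief from what actually happens.

```python
from dataclasses import dataclass


@dataclass
class Room:
    """Toy environment (hypothetical): each unit of heat adds 0.5 degrees."""
    temperature: float = 15.0

    def apply(self, heat: float) -> float:
        self.temperature += 0.5 * heat
        return self.temperature


@dataclass
class EnactiveAgent:
    """Acts, observes the real outcome, and revises its internal belief."""
    target: float
    believed_effect: float = 1.0  # initial, wrong belief: degrees gained per unit of heat

    def step(self, room: Room) -> float:
        before = room.temperature                      # perceive
        heat = (self.target - before) / self.believed_effect
        after = room.apply(heat)                       # act
        if heat != 0:
            # update: replace the belief with the effect that was actually observed
            self.believed_effect = (after - before) / heat
        return after


def run_loop(steps: int = 3) -> tuple[Room, EnactiveAgent]:
    room, agent = Room(), EnactiveAgent(target=21.0)
    for _ in range(steps):
        agent.step(room)
    return room, agent
```

A pattern-completion system would stop after the first action and never notice that it undershot. In this toy, the feedback step is what lets the second action land on target.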

The enactive floor represents the minimum threshold at which a system can meaningfully understand the consequences of what it does. It is the point at which behavior is shaped by feedback rather than just prompts, and where the system can remain coherent even as conditions change. Below that threshold, the system does not simply become less capable. It becomes unpredictable in ways that are difficult to anticipate or contain.

This is why so many of today’s systems appear intelligent in controlled settings and fragile in real ones. They are strong at symbolic reasoning but lack causal grounding. They can explain what should happen, but they have no internal mechanism to verify whether it did. That gap is not a matter of better training or more data. It is architectural.

The second issue is even less discussed, and arguably more dangerous. Most systems today cannot undo their own decisions. Once a sequence of actions has been set in motion, there is no native way to step back, reassess, and take a different path. In technical terms, they lack state-space reversibility.

This becomes a serious problem the moment these systems are deployed in environments where decisions have consequences. A flawed recommendation does not just stay local; it influences downstream behavior. A poor operational decision in a supply chain does not remain isolated; it propagates. When autonomy increases, these effects do not just persist, they accelerate.

What makes this risky is not that systems make mistakes. All systems do. The problem is that they are not built to recover from them in a structured way. They move forward, commit to their outputs, and lack the internal ability to revisit prior states. In effect, they behave like write-only systems.

If you are thinking about safety in any serious way, this should change how you evaluate intelligence. It is not enough for a system to produce the right answer most of the time. It needs to retain the ability to change course when the situation demands it. That requires architectures where decisions are traceable, where intermediate states are preserved, and where alternative paths remain accessible.
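One way to picture those three properties (traceable decisions, preserved intermediate states, accessible alternative paths) is a decision log that snapshots state before every action. The sketch below is illustrative only: `ReversibleAgent` and its methods are hypothetical names, and it reverses only the agent's internal state. Undoing side effects in the outside world would need compensating actions that this toy does not model.

```python
import copy
from typing import Any, Callable

State = dict[str, Any]


class ReversibleAgent:
    """Keeps a labelled snapshot of its state before every decision."""

    def __init__(self, initial: State) -> None:
        self.state: State = copy.deepcopy(initial)
        self._history: list[tuple[str, State]] = []  # (decision label, state before it)

    def act(self, label: str, action: Callable[[State], None]) -> None:
        # Preserve the intermediate state so this decision can be revisited later.
        self._history.append((label, copy.deepcopy(self.state)))
        action(self.state)

    def trace(self) -> list[str]:
        """Decisions still in effect, oldest first."""
        return [label for label, _ in self._history]

    def undo(self, steps: int = 1) -> None:
        """Step back to the state before the last `steps` decisions."""
        for _ in range(steps):
            if not self._history:
                raise IndexError("nothing to undo")
            _, self.state = self._history.pop()
```

For example, after `agent.act("reserve 30 units", ...)` and `agent.act("ship order A", ...)`, a single `agent.undo()` restores the state as it was before shipping, and `agent.trace()` shows only the reservation. From there the agent is free to take a different path.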

Safe AGI is not defined by how far it can go. It is defined by whether it knows when and how to step back.

OpenAI Says GPT Has “Line of Sight” to AGI. What Does It Mean?

OpenAI’s Greg Brockman has made something very clear: in his view, the debate is over. GPT-style reasoning models are not just progressing toward AGI, they already have a “line of sight” to it.

That’s a strong claim, and it forces you to confront a deeper question. Can intelligence emerge purely from language, or does it require a grounded understanding of the world?

Right now, the industry is split down the middle.

On one side, you have OpenAI doubling down on the idea that scaling reasoning models is the fastest path forward. On the other, researchers like Yann LeCun and Demis Hassabis argue that language alone is not enough.

Their position is technical, not philosophical. Language models still struggle with persistent memory, causal reasoning, and long-horizon planning. These are not edge cases. They are core requirements for general intelligence.

This is why alternatives like world models and simulation-based learning keep coming up. Researchers such as François Chollet define intelligence not by how much you know, but by how efficiently you can learn something new. By that definition, current models still rank low. They perform well inside their training distribution, but outside of it, they effectively start from scratch.

So why is OpenAI so confident?

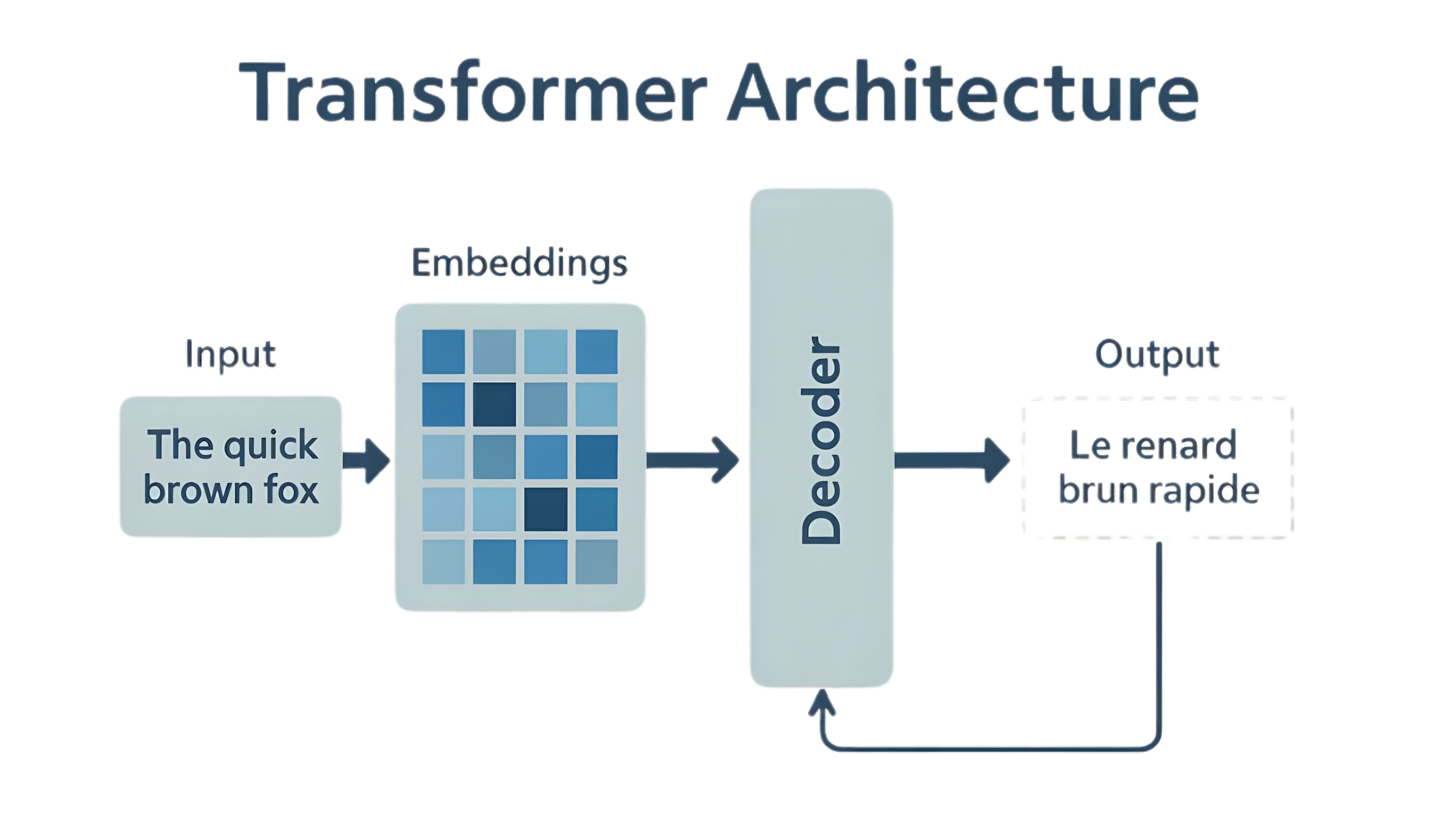

The answer lies in how reasoning models have evolved. GPT systems are no longer just predicting the next token.

They are being trained to simulate multi-step reasoning, maintain context over longer horizons, and internally “think” before responding. At scale, this starts to look less like autocomplete and more like structured cognition.

There’s also a deeper technical argument here. Some researchers, like Adam Brown, compare token prediction to biological evolution. A simple underlying rule, when scaled massively, can produce emergent complexity. In that sense, intelligence does not need to be explicitly designed. It can emerge.

But that’s still a bet.

Even Brockman admits this is about sequencing. OpenAI has deprioritized world models like Sora, not because they are unimportant, but because compute and focus are finite. You cannot pursue every path at once. So the company is choosing to push GPT-style reasoning as far as it can go before revisiting other approaches.

That decision matters more than it seems. If GPT-style architectures do hit a ceiling, the industry may have to pivot hard and late. If they don’t, OpenAI gets there first.

Why Does This Matter More Than You Think?

If you’re running a business, this is not an academic debate, because your AI strategy is already being shaped by this exact assumption.

If GPT-style models are enough, then the path forward is clear. You invest in better reasoning models, integrate them deeper into workflows, and scale automation confidently.

But if the skeptics are right, you’re building on a layer that still lacks:

- true causal understanding
- reliable generalization
- consistent behavior outside trained scenarios

That’s where risk starts to creep in.

The reality is that “line of sight” does not mean arrival. It means direction. And in a space moving this fast, direction determines where you place your bets.

The companies that win won’t be the ones that blindly trust one path. They’ll be the ones that build systems flexible enough to adapt if the path changes.

Arm Just Launched Its First-Ever AGI CPU, And It’s Built for the Agentic AI Cloud Era

Everyone is busy talking about the “agentic AI cloud era” like it’s just another upgrade cycle. It’s not. It’s a complete shift in how computing actually works, and more importantly, how it breaks.

Until now, humans were the bottleneck. Every system, every workflow, every decision loop moved at the pace of human input. But in an agentic world, where AI agents coordinate tasks, trigger workflows, and make decisions in real time, that bottleneck disappears. Systems don’t wait anymore. They run continuously.

And when systems run continuously, the weakest layer is no longer the model. It’s the infrastructure underneath.

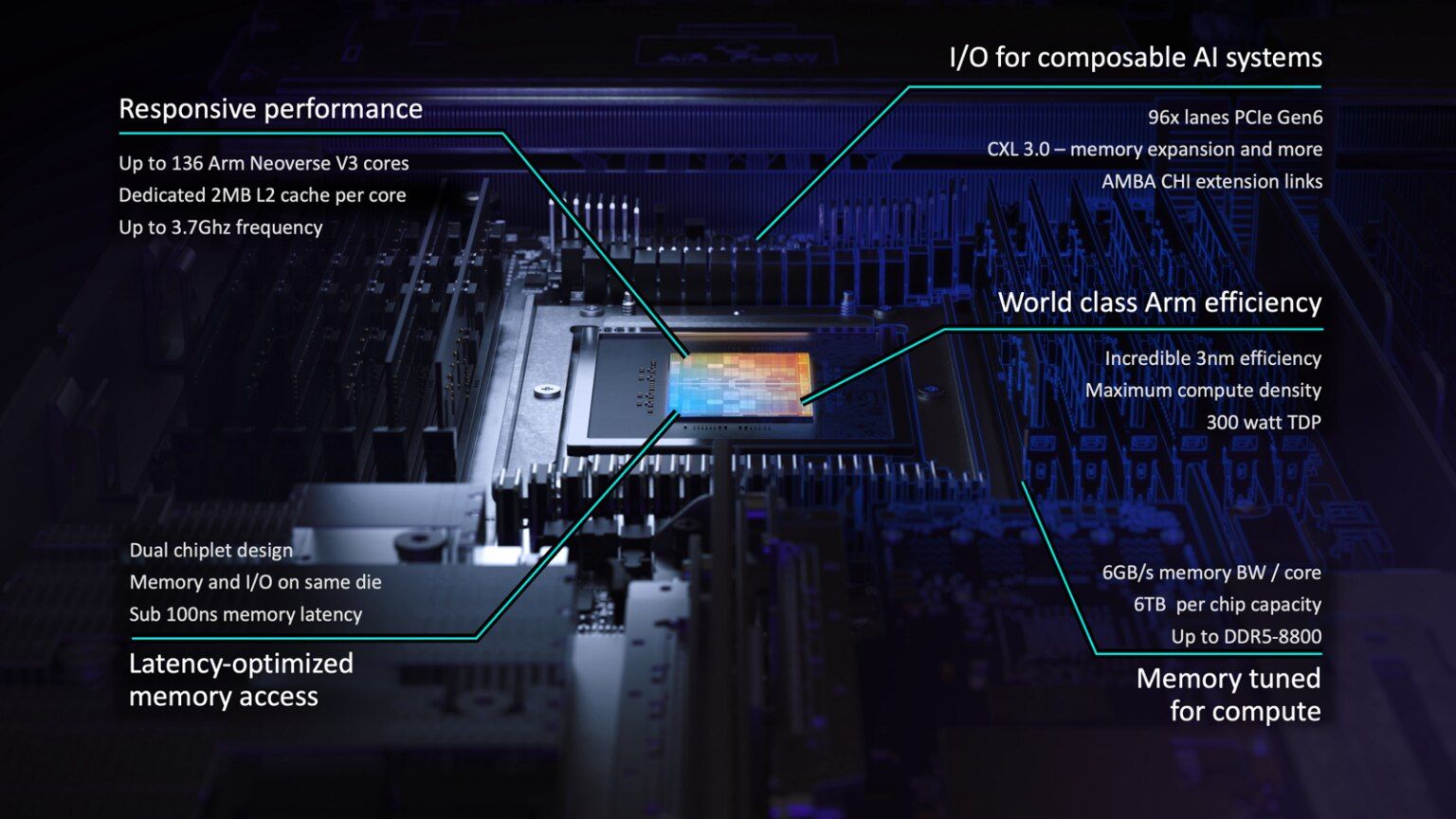

That’s exactly where Arm is placing its bet with the launch of the Arm AGI CPU.

This isn’t just another chip announcement. It signals a deeper realization that in an agentic AI environment, the CPU becomes the orchestrator of intelligence. It is no longer just executing instructions. It is coordinating thousands of parallel processes, managing memory flows, scheduling workloads, and increasingly, handling the fan-out of multiple AI agents working simultaneously.

Think about what that means in practice. One customer query could trigger a chain of agents handling search, recommendation, pricing, inventory, and fulfillment, all in parallel. That orchestration layer becomes mission-critical. And if it fails, everything fails.
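Here is a rough sketch of that fan-out using Python's standard `asyncio` library. The agent names come from the example above. The `run_agent` and `orchestrate` functions and the `failing` parameter are hypothetical stand-ins, not any vendor's orchestration API. The point is that the orchestrator starts all agents at once and decides what happens when one of them fails.

```python
import asyncio

AGENTS = ["search", "recommendation", "pricing", "inventory", "fulfillment"]


async def run_agent(name: str, query: str, failing: frozenset) -> str:
    """Hypothetical agent call; asyncio.sleep stands in for real network I/O."""
    await asyncio.sleep(0.01)
    if name in failing:
        raise RuntimeError(f"{name} agent unavailable")
    return f"{name}: handled {query!r}"


async def orchestrate(query: str, failing: frozenset = frozenset()) -> dict:
    # Start every agent concurrently and wait for all of them to settle.
    outcomes = await asyncio.gather(
        *(run_agent(name, query, failing) for name in AGENTS),
        return_exceptions=True,  # a failed agent yields its exception instead of aborting the rest
    )
    return {
        name: f"failed: {outcome}" if isinstance(outcome, Exception) else outcome
        for name, outcome in zip(AGENTS, outcomes)
    }
```

Calling `asyncio.run(orchestrate("blue shoes"))` returns one entry per agent. With `return_exceptions=True`, a single failing agent shows up as a `failed: ...` entry rather than taking the whole request down, which is one possible design choice for an orchestration layer.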

This is where most companies are still underprepared.

You can deploy the best models in the world, but if your underlying data is inconsistent, your pipelines are fragile, or your systems cannot reconcile state across agents, the entire setup becomes unpredictable. This is exactly the gap DataManagement.AI aims to close.

Because in an agentic setup, data is not static. It is constantly moving, updating, and being acted upon. Without continuous data quality monitoring, you are essentially feeding autonomous systems unreliable inputs.

What DataManagement.AI enables is a real-time layer of trust, where datasets are validated continuously, anomalies are flagged instantly, and downstream decisions are not based on broken pipelines.
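As a generic illustration of continuous validation, and not a description of how DataManagement.AI or any other product works, here is a minimal sketch of a streaming check that rejects missing values and flags outliers against a rolling window. The `StreamValidator` class, its window size, and its threshold are all assumptions chosen for the example.

```python
from collections import deque
from statistics import mean, pstdev


class StreamValidator:
    """Accepts or flags each incoming value against a rolling window of accepted ones."""

    def __init__(self, window: int = 20, threshold: float = 3.0, min_history: int = 5) -> None:
        self.recent: deque = deque(maxlen=window)
        self.threshold = threshold
        self.min_history = min_history

    def check(self, value) -> bool:
        """Return True if the value is accepted, False if it is flagged."""
        if not isinstance(value, (int, float)):
            return False  # missing or malformed input
        if len(self.recent) >= self.min_history:
            mu, sigma = mean(self.recent), pstdev(self.recent)
            if sigma > 0 and abs(value - mu) > self.threshold * sigma:
                return False  # too far from recent behavior; keep it out of the window
        self.recent.append(value)
        return True
```

Flagged values are deliberately not added to the window, so one bad reading does not shift the baseline that later readings are judged against.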

Now bring that back to infrastructure.

The Arm AGI CPU is designed for exactly this kind of workload: high core density, massive parallelism, and sustained performance under continuous load. We are talking about racks supporting tens of thousands of cores, optimized not just for compute but for coordination. That’s the key difference.

Even more interesting is how this integrates into the broader ecosystem. From Meta to OpenAI, the early adopters are not experimenting. They are scaling. They are building infrastructure that assumes agents will be the default interface, not an add-on.

But here’s the uncomfortable truth.

Most businesses are still thinking in terms of dashboards, reports, and periodic insights. That model does not survive in an agentic environment. When decisions are being made in real time by systems, you don’t get the luxury of catching errors later.

This is why capabilities like master data management and real-time anomaly detection, again something DataManagement.AI embeds directly into workflows, become foundational rather than optional. If your “golden record” is wrong, your agents will scale that mistake instantly. If your demand forecasts are off, your entire supply chain will react incorrectly in minutes, not weeks.

The agentic AI cloud era is not about faster computing. It is about continuous computing. And continuous systems amplify whatever foundation you give them, whether that is clean, governed data or fragmented, inconsistent pipelines.

The companies that win here will not be the ones with the most advanced models. They will be the ones whose infrastructure, data, and orchestration layers are designed to handle autonomy at scale without breaking under it.

Google Just Open-Sourced Power: Why Gemma 4 Might Matter More Than Gemini for Builders

When Google launched Gemma 4, it wasn’t trying to beat Gemini. It was solving a different problem entirely: who gets to build with AI, and how fast.

Gemma 4 is Google’s most capable open model yet, built from the same research backbone as Gemini but designed for a completely different reality. Gemini is powerful, closed, and cloud-first. Gemma 4 is open, flexible, and increasingly local-first. That distinction is not cosmetic. It changes who controls the AI stack.

At a technical level, Gemma 4 pushes something that matters more than raw size: intelligence per parameter. Its 31B model ranks among the top open models globally, outperforming systems many times larger.

The 26B Mixture-of-Experts model activates only a fraction of its parameters during inference, which means faster responses and lower compute costs without sacrificing capability. This is not just efficiency. It is a shift toward deployable intelligence.
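To see why activating only some experts saves compute, consider a toy top-k router. This is a generic sketch of the Mixture-of-Experts idea under simplifying assumptions (scalar inputs, hand-written router scores, four tiny "experts"). It is not Gemma 4's actual architecture or routing code.

```python
import math
from typing import Callable


def softmax(scores: list[float]) -> list[float]:
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def moe_forward(
    x: float,
    router_scores: list[float],
    experts: list[Callable[[float], float]],
    k: int = 2,
) -> tuple[float, list[int]]:
    """Run only the k highest-scoring experts and mix their outputs."""
    ranked = sorted(range(len(experts)), key=lambda i: router_scores[i], reverse=True)
    chosen = ranked[:k]
    weights = softmax([router_scores[i] for i in chosen])
    # Experts outside `chosen` are never called, which is where the savings come from.
    output = sum(w * experts[i](x) for w, i in zip(weights, chosen))
    return output, chosen


# Four hypothetical experts that simply scale their input by 1, 2, 3, and 4.
EXPERTS = [lambda x, s=s: s * x for s in (1.0, 2.0, 3.0, 4.0)]
```

With `router_scores = [0.1, 2.0, -1.0, 2.0]` and `k=2`, only the second and fourth experts run. The model carries the capacity of all four, but each input pays for two.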

More importantly, Gemma 4 is built for agentic workflows from the ground up. It supports structured outputs, function calling, and multi-step reasoning, which means it is not just answering questions, it is executing tasks across systems.
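In practice, function calling usually means the model emits a structured request and your application decides whether and how to run it. The sketch below assumes a simple JSON shape with `name` and `arguments` keys. That shape, the `get_stock` tool, and the `dispatch` helper are hypothetical and not Gemma 4's documented format, so check the model's own documentation for the real schema.

```python
import json
from typing import Any, Callable


def get_stock(sku: str) -> int:
    """Hypothetical tool: units on hand for a SKU."""
    inventory = {"A-100": 12, "B-200": 0}
    return inventory.get(sku, 0)


# Only tools registered here can ever be executed.
TOOLS: dict[str, Callable[..., Any]] = {"get_stock": get_stock}


def dispatch(model_output: str) -> Any:
    """Parse a structured tool call emitted by a model and run the matching tool."""
    call = json.loads(model_output)
    if not isinstance(call, dict):
        raise ValueError("expected a JSON object")
    name = call.get("name")
    arguments = call.get("arguments", {})
    if not isinstance(name, str) or name not in TOOLS:
        raise ValueError(f"unknown tool: {name!r}")
    if not isinstance(arguments, dict):
        raise ValueError("arguments must be a JSON object")
    return TOOLS[name](**arguments)
```

The allowlist is the important design choice here: the model can request an action, but only functions you have registered will run.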

Combine that with multimodal inputs like text, image, video, and even audio on smaller edge models, and you start to see where this is going: AI that runs workflows locally, not just in the cloud.

That is where the biggest difference from Gemini shows up. Gemini remains the flagship, optimized for scale, enterprise APIs, and controlled environments. Gemma 4, on the other hand, is designed to run anywhere, from laptops to Android devices to on-prem systems. With context windows up to 256K tokens and support for over 140 languages, it is built for real-world deployment, not just demos.

The Apache 2.0 license is the real unlock. Developers can fine-tune, modify, and deploy Gemma 4 without restrictive constraints. That means full control over data, infrastructure, and behavior. In a world where AI is becoming a core layer of business operations, that level of control is not a feature, it is leverage.

This is also where the deeper conversation around AI direction becomes relevant. If you’ve been following Towards AGI, the narrative has been consistent: the future of AI is not just about scaling closed models, but about enabling ecosystems where intelligence can be deployed, adapted, and owned. Gemma 4 fits squarely into that thesis.

It lowers the barrier to experimentation while pushing capabilities closer to the edge, where real-world workflows actually happen.

What Google is really doing here is splitting the AI stack into two parallel tracks. Gemini will define the ceiling of what AI can do. Gemma will define how widely that capability spreads. And increasingly, those who control the open layer will move faster.

For business owners, this changes the equation. You no longer need frontier-scale infrastructure to deploy advanced AI. You can run powerful models locally, customize them to your workflows, and reduce dependency on external APIs. But that also means the responsibility shifts inward.

Your competitive edge will depend less on which model you choose and more on how well your internal systems are structured to support it.

Because in this new setup, the model is no longer the bottleneck.

Execution is.

Journey Towards AGI

Research and advisory firm guiding on the journey to Artificial General Intelligence

Your opinion matters!

We hope you enjoyed reading this newsletter as much as we enjoyed writing it.

Share your experience and feedback with us, ’cause we take your critique very seriously.

Thank you for reading

-Shen & Towards AGI team