New AI Benchmark Revealed: We’re Still Nowhere Near AGI

Is that an issue?

Today, we’re diving into:

Hot Tea: ARC-AGI-3 shows humans still outperform AI systems

Open AI: Google signals the real future of offline intelligence

Open AI: AI is rewiring trade sales into data systems

New Report: We Are Still Far From AGI

The conversation around AGI often moves faster than the underlying evidence. Every new model release tends to bring renewed claims of proximity to AGI, but a new benchmark from the ARC Prize Foundation introduces a more grounded and technically rigorous perspective on where we actually stand.

The ARC team’s latest evaluation, called ARC-AGI-3, is designed to measure something fundamentally different from traditional AI benchmarks. Instead of testing whether models can solve fixed datasets or reproduce learned patterns, it evaluates whether an AI system can operate in completely unfamiliar environments without any prompts, instructions, or predefined goals. The system is placed into turn-based scenarios where it must infer structure, determine objectives, and develop strategies purely through interaction.

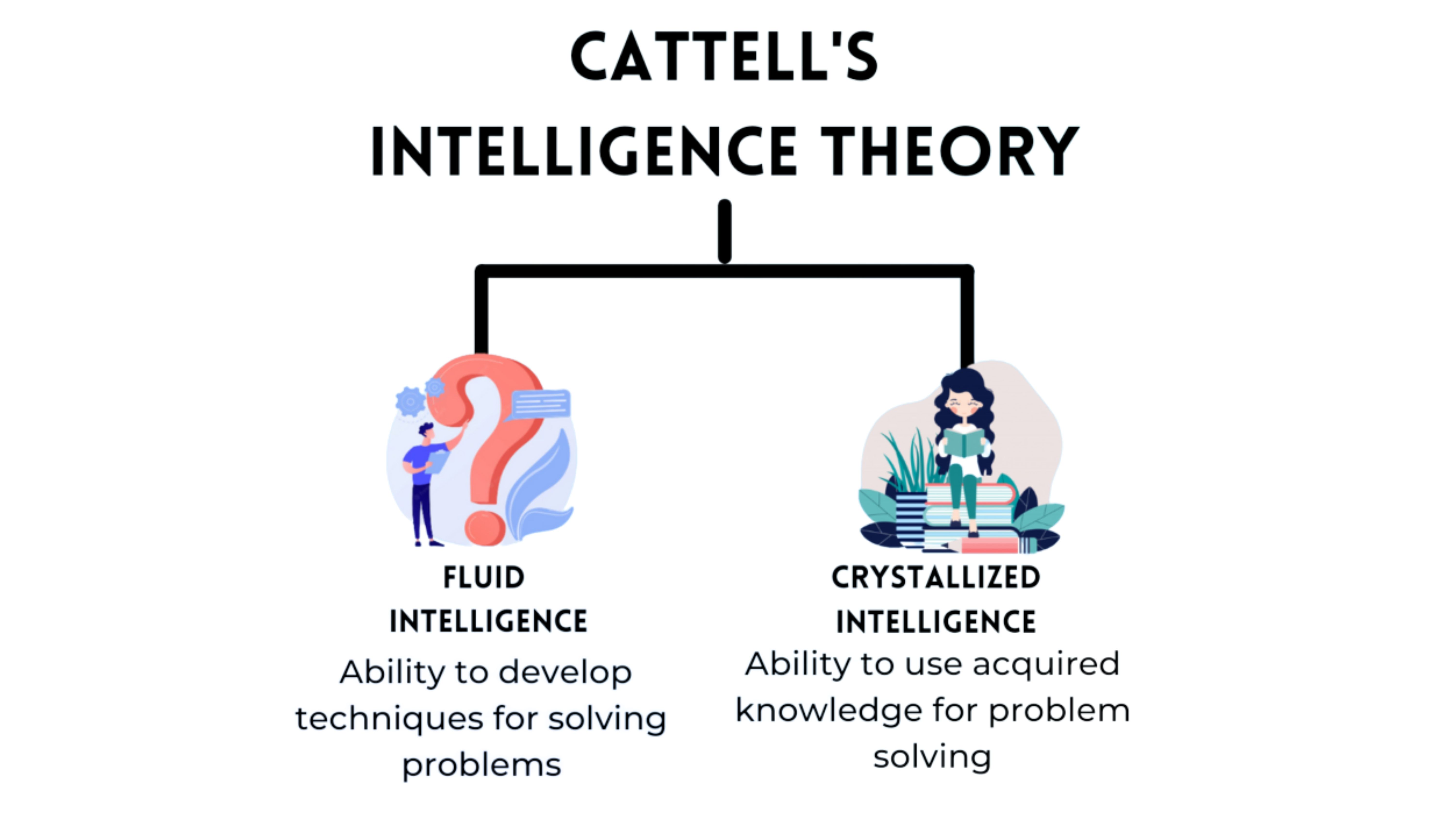

This design directly targets what researchers call fluid intelligence, which is the ability to adapt to new problems without relying on prior exposure. Unlike conventional benchmarks that can be partially solved through large-scale memorization or statistical generalization, ARC-AGI-3 deliberately removes any opportunity for training leakage. The environments are kept private, and brute-force search strategies are penalized, making it closer to a real-world test of autonomous reasoning.

The results highlight a significant gap between human cognition and current frontier models. A perfect score represents human-level performance across all environments, yet no system comes close. According to the leaderboard, GPT-5.4 reaches approximately 0.3 percent, while Gemini 3.1 Pro and Opus 4.6 both sit around 0.2 percent. Grok 4.20 records essentially zero meaningful progress. In contrast, human testers are able to solve all environments on their first attempt, which reinforces how large the gap still is in general problem-solving ability.

What makes this benchmark particularly important is that it strips away the advantages modern models typically rely on. Large language models are extremely effective at pattern completion and contextual prediction, but ARC-AGI-3 does not provide patterns to complete. It requires agents to construct internal models of unfamiliar systems and continuously update them through interaction. In practical terms, it tests whether a system can behave like an adaptive problem solver rather than a sophisticated autocomplete engine.
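To make "construct an internal model and update it through interaction" concrete, here is a minimal sketch in Python. Everything in it is an assumption for illustration: `ToyEnv`, its opaque action ids, and the `explore` policy are hypothetical and far simpler than the actual benchmark, whose interface is not described here. The agent is given no goal and no description of the actions. It tries each action once, records what changed, and then prefers actions it predicts will lead to states it has not seen.

```python
from dataclasses import dataclass, field


@dataclass
class ToyEnv:
    """Hypothetical toy environment; NOT the ARC-AGI-3 interface."""
    size: int = 8
    goal: int = 6   # hidden from the agent
    pos: int = 2
    # Opaque action ids: the agent is never told what each one does.
    effects: dict = field(default_factory=lambda: {0: 0, 1: -1, 2: 0, 3: 1})

    def step(self, action):
        """Apply an action and return (observation, solved)."""
        self.pos = min(self.size - 1, max(0, self.pos + self.effects[action]))
        return self.pos, self.pos == self.goal


def explore(env, actions=(0, 1, 2, 3), max_turns=50):
    """Learn what each action does, then steer toward unvisited states."""
    model = {}            # learned: action -> last observed change in state
    state = env.pos       # initial observation
    visited = {state}
    for turn in range(1, max_turns + 1):
        untried = [a for a in actions if a not in model]
        if untried:
            # Phase 1: probe every action once to see what it does.
            action = untried[0]
        else:
            # Phase 2: pick an action predicted to reach a new state.
            novel = [a for a in actions if state + model[a] not in visited]
            action = novel[0] if novel else max(actions, key=lambda a: abs(model[a]))
        new_state, solved = env.step(action)
        model[action] = new_state - state   # update the internal model
        visited.add(new_state)
        state = new_state
        if solved:
            return turn, model
    return None, model


turns, model = explore(ToyEnv())
print(turns, model)
```

Even in this toy setting, the agent spends half of its turns just discovering what its actions do. The sketch also overwrites its model on every step, so an action tried against a wall would be mislearned as a no-op, a small reminder of how fragile naive exploration can be.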

From a technical standpoint, this exposes a limitation that scaling alone has not resolved. Increasing parameter counts or training data improves performance on known distributions, but it does not reliably translate into the ability to form strategies in novel environments. This distinction is critical because it suggests that current architectures are still optimized for interpolation rather than true extrapolative reasoning.

In my view, ARC-AGI-3 is less a prediction tool for AGI timelines and more a calibration instrument for the field. It forces a correction in how capability is interpreted. High benchmark scores in language tasks are often mistaken for general intelligence, but this evaluation shows that such performance does not necessarily transfer to autonomous learning scenarios.

The implication is not that AI progress is slowing, but that it is structurally uneven. Systems today are highly capable within defined workflows and tool-augmented environments, but they are not yet reliable autonomous agents that can operate without scaffolding. This creates a clear strategic boundary.

The advantage does not come from waiting for AGI, but from designing processes that leverage strong narrow intelligence while accounting for its limitations in adaptability and independent reasoning.

Google’s Offline AI Move Signals the Real Future of Mobile Intelligence

The industry has long assumed that meaningful artificial intelligence must operate through the cloud. Google’s new offline-first application, AI Edge Eloquent, challenges that assumption directly by moving core intelligence onto the device itself.

The app is built on the Gemma architecture and reflects a broader shift in how lightweight, task-specific models are being deployed outside of data center environments. While large systems like Gemini depend on distributed compute infrastructure with high parameter counts and continuous connectivity, AI Edge Eloquent demonstrates that not all intelligence requires that level of scale or external dependency.

The app runs speech recognition and language refinement entirely on-device. Instead of streaming audio to the cloud for processing, it performs local inference to convert speech into structured text while simultaneously filtering disfluencies, correcting grammar patterns, and inferring punctuation. The output is then optimized for immediate reuse across applications through clipboard-level integration, which effectively turns the device into a self-contained transcription and rewriting system.
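The cleanup stage described above can be illustrated with a rough Python sketch. This is a rule-based stand-in, not how the app works: the source says the app uses an on-device model, whereas the regular expressions and the `clean_transcript` function below are assumptions made purely to show the kind of transformation involved (dropping filler words, collapsing stutters, adding basic capitalization and end punctuation).

```python
import re

# Assumed filler list for illustration; a real system would learn these.
FILLERS = re.compile(r"\b(?:um+|uh+|er+|you know)\b,?\s*", re.IGNORECASE)
# A word immediately repeated one or more times, e.g. "I I think".
REPEATS = re.compile(r"\b(\w+)(?:\s+\1\b)+", re.IGNORECASE)


def clean_transcript(raw: str) -> str:
    """Turn a raw speech transcript into a tidier sentence."""
    text = FILLERS.sub("", raw)              # remove filler words
    text = REPEATS.sub(r"\1", text)          # collapse repeated words
    text = re.sub(r"\s+", " ", text).strip() # normalize whitespace
    if not text:
        return text
    text = text[0].upper() + text[1:]        # capitalize the first letter
    if text[-1] not in ".?!":
        text += "."                          # add end punctuation if missing
    return text


print(clean_transcript("um so I I think we should uh ship it on friday"))
# prints: So I think we should ship it on friday.
```

Fixed rules like these break quickly on real speech (they would also collapse a legitimate "that that"), which is part of why a learned model, even a small one, is the more plausible fit for this task.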

What makes this system notable is its separation of concerns. Cloud-based models are still necessary for general-purpose reasoning and broad contextual intelligence, but AI Edge Eloquent shows that narrow, high-frequency tasks can be decomposed into efficient edge models. This reduces latency, improves privacy, and removes dependency on network availability, which becomes critical in real-world conditions where connectivity is inconsistent.

The personalization layer is also technically significant. The app adapts to user-specific vocabulary by learning from corrections and optionally integrating contextual signals such as Gmail history, which improves named entity recognition and domain-specific language modeling. This creates a lightweight on-device adaptation loop without requiring centralized retraining.
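As a hedged illustration of what a local adaptation loop might look like, the Python sketch below keeps a small table of user corrections and starts applying one only after it has been seen more than once. The `PersonalVocabulary` class, its method names, and the `min_count` threshold are all hypothetical. The app's actual mechanism is not described in the source and is presumably model-based rather than a lookup table.

```python
import re
from collections import Counter


class PersonalVocabulary:
    """Hypothetical on-device store of user corrections."""

    def __init__(self, min_count: int = 2):
        self.min_count = min_count   # corrections needed before auto-applying
        self.counts = Counter()      # (heard, corrected) -> times seen

    def record_correction(self, heard: str, corrected: str) -> None:
        """Remember that the user changed `heard` into `corrected`."""
        self.counts[(heard.lower(), corrected)] += 1

    def apply(self, text: str) -> str:
        """Rewrite text using corrections seen at least `min_count` times."""
        for (heard, corrected), n in self.counts.items():
            if n >= self.min_count:
                pattern = rf"\b{re.escape(heard)}\b"
                # A function replacement avoids escape handling in `corrected`.
                text = re.sub(pattern, lambda m, c=corrected: c, text,
                              flags=re.IGNORECASE)
        return text


vocab = PersonalVocabulary()
vocab.record_correction("jamma", "Gemma")
print(vocab.apply("open the jamma docs"))   # unchanged after one correction
vocab.record_correction("jamma", "Gemma")
print(vocab.apply("open the jamma docs"))   # now rewritten
```

Nothing here leaves the device or retrains a model, which is the point of the loop described above: the adaptation lives in a small piece of local state.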

In my view, AI Edge Eloquent represents a quiet but important architectural shift in AI systems. The future is not defined by a single dominant model but by a layered intelligence stack where cloud models handle general reasoning while edge models execute narrow, context-aware tasks locally. This separation is what will make AI feel truly usable in everyday environments rather than remaining dependent on ideal network conditions.

How AI Is Quietly Rewiring General Trade Sales

AI is increasingly moving beyond experimentation and into the core mechanics of revenue generation, and a new report from BCG suggests that general trade sales could see a structural uplift of 15 to 20% when AI is embedded across planning, execution, and operations.

What makes this shift operationally viable is not just model capability but the ease with which AI systems can now integrate into existing enterprise workflows. DataManagement.AI is focusing on this by simplifying how fragmented sales and operational data is connected into a single decision layer without requiring heavy system overhauls.

The underlying transformation is less about automation in isolation and more about consolidating scattered enterprise signals into a unified intelligence layer. Traditional trade sales systems rely heavily on structured inputs such as billing records and loyalty data, but modern AI systems extend this by ingesting unstructured signals like store images, handwritten notes, and recorded sales conversations.

When these streams are connected effectively through workflow integration layers, they create a continuous feedback loop that allows models to move from descriptive analytics to prescriptive decision-making.

At the operational level, AI is being deployed in two distinct but interconnected layers. The first is the sales intelligence layer, where AI-powered assistants help field representatives optimize route planning, retrieve historical retailer interactions, and generate context-aware pitch recommendations for each outlet.

The second is the autonomous engagement layer, where 24/7 digital sales agents handle recurring interactions such as order capture through voice in local languages, query resolution, and payment reminders, ensuring continuity even outside working hours.

DataManagement.AI is positioned to ensure these capabilities plug into existing CRM and ERP systems without disrupting established field workflows.

The most significant shift, however, is occurring in backend operations, where AI is being applied to automate billing reconciliation, scheme settlement, financial validation, and query resolution. These are traditionally high-friction processes that depend on manual coordination across teams.

When integrated properly into enterprise workflows, these systems reduce operational overhead while improving accuracy, freeing up human teams to focus on higher-value commercial strategy and relationship building.

What stands out in the BCG analysis is the idea of leapfrogging maturity stages. As highlighted by Parul Bajaj from BCG’s marketing and sales practice, organizations no longer need to digitize their sales infrastructure gradually.

Instead, they can transition directly into AI-enabled operating models if the underlying data and workflows are connected effectively, which is where integration-first approaches become critical.

From a systems perspective, this represents a shift in how go-to-market strategies are executed. Micro-market identification using location data, footfall analysis, and store profiling allows sales decisions to be made at the level of individual outlets rather than broad regional assumptions.

The companies that will benefit most are not just those adopting AI tools, but those building integrated, workflow-native systems where intelligence is continuously embedded into execution rather than layered on top of it.

In my view, the real inflection point is not the projected 15 to 20% revenue uplift but the restructuring of decision-making itself. As AI begins to recommend what to sell, where to sell, and when to engage, the role of human teams shifts toward supervising interconnected systems rather than manually driving them.

The winners in this phase will be organizations that treat AI not as a tool, but as an integrated operational fabric across their entire sales architecture.

Journey Towards AGI

Research and advisory firm guiding on the journey to Artificial General Intelligence

Thank you for reading

-Shen & Towards AGI team