AI Rewrote the Rules Overnight. Did You Notice?

There is one catch.

Today, we’re diving into:

AI news: AI exposed your team's 25% daily waste

Hot Tea: Free frontier AI your devs aren't using yet

OpenAI: AI rewrote its own code. Beat every engineer.

Closed AI: $122B raised. Your AI strategy is already late.

Your Employees Are Wasting 25% of Their Day. AI Just Exposed Why.

Here is what MIT's research tells you about where your team's hours are really going, and what to do about it right now.

24.9%: drop in project management time after AI adoption, per MIT Sloan research (2026)

Think of your senior developer. She is smart, experienced, and expensive. But right now, she is not writing code. She is reviewing a pull request, chasing a status update, and sitting in a sprint planning call she did not need to attend.

This is not a culture problem. It is a workflow problem. And MIT Sloan research confirms it is happening inside most companies that have not yet made AI part of how work actually gets done.

The Real Reason Your Skilled Hires Are Doing Low-Value Work

Researchers at MIT tracked 187,000 software developers over three years. Before AI tools, developers spent only 44% of their time on coding. The rest went to coordination, reviews, and admin overhead.

When those same developers got access to generative AI, core coding time rose by 12.4%. Project management tasks fell by nearly 25%. The shift was not temporary. It held over the long term and showed no signs of reverting.
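The percentages are easier to judge in hours. The sketch below is a back-of-the-envelope calculation. It assumes a 40-hour week and reads the 12.4% rise as relative to the 44% baseline. The figures quoted here do not say which reading is intended, so treat the result as illustrative.

```python
# Back-of-the-envelope: what a 12.4% rise in coding time means in hours.
# Assumptions (not from the MIT study): a 40-hour week, and the 12.4% rise
# is relative to the 44% baseline rather than in percentage points.
WEEK_HOURS = 40.0
BASELINE_CODING_SHARE = 0.44
RELATIVE_INCREASE = 0.124


def coding_hours(week_hours: float, share: float, increase: float) -> tuple[float, float]:
    """Return (hours spent coding before AI, hours spent coding after AI)."""
    before = week_hours * share
    after = before * (1 + increase)
    return before, after


before, after = coding_hours(WEEK_HOURS, BASELINE_CODING_SHARE, RELATIVE_INCREASE)
print(f"before: {before:.1f} h/week, after: {after:.1f} h/week, gain: {after - before:.1f} h")
```

Reading the rise as 12.4 percentage points instead would put coding at 56.4% of the week, about 22.6 hours. The interpretation matters when you translate the research into staffing plans.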

The takeaway is direct. When AI handles the friction, your people move toward the work that actually creates value. The problem is that most companies are still running on workflows built before these tools existed.

Junior Talent Suffers the Most Without This Fix

The MIT study found that less-experienced employees saw the largest gains from access to AI. They coded more, learned faster, and built skills at a pace their predecessors never could.

MIT researchers called cutting entry-level roles to save money, while ignoring these gains, a "profound strategic error." The companies winning right now are investing in AI-augmented junior teams, not eliminating them.

The Collaboration Trap You Need to Watch

There is one catch that the research flags clearly. When developers used AI more, peer collaboration dropped by nearly 80%. Less time debating bad code is good. But losing the human exchange that drives innovation and culture is a real risk.

Your job is not to hand everything to AI. It is to use AI to remove the overhead so your team can collaborate where it matters, not where it is forced to.

Google Just Handed Your Dev Team a Free Frontier AI Model. Are You Actually Using It?

Gemma 4 is live, open source, and running on consumer hardware right now. Here is what it means for your product roadmap and your competitors who are already moving.

400M+: downloads of Gemma models since launch, across all generations

Your Competitors Got a Free Upgrade. Here Is What They Just Unlocked.

Google released Gemma 4 on April 2, 2026, under the Apache 2.0 license. That means any company, anywhere, can download it, modify it, ship it inside their product, and owe Google nothing except attribution.

No subscriptions. No usage fees. No vendor lock-in. If a competitor in your industry shipped an AI product feature last week, there is a real chance this is what they used.

Why This Release Is Different From Every Gemma Before It

Previous Gemma versions were open-weight but still governed by Google's own usage terms. You could download the model, but redistribution came with restrictions. Gemma 4 removes that entirely.

Under Apache 2.0, your team can embed this model into a client-facing product, fine-tune it on your proprietary data, and redistribute the result without a single royalty. That changes the build-versus-buy calculation for almost every AI feature on your roadmap.

#3: rank of Gemma 4's 31B model on the Arena AI global text leaderboard, ahead of models 20x its size

You Can Run Frontier-Level AI Without a Cloud Bill

The 26B and 31B Gemma 4 models are designed to run on a single NVIDIA H100 GPU. Quantized versions run on consumer-grade hardware. Your team does not need a six-figure cloud compute budget to access this level of capability.
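A rough weight-memory estimate shows why a single H100 and quantized consumer GPUs are plausible targets. This sketch counts model weights only. It ignores the KV cache, activations, and runtime overhead, all of which add to the real requirement. The 80 GB H100 capacity and the 24 GB consumer-card figure are assumptions chosen for comparison, not Google's published requirements.

```python
# Weight-only memory estimate: parameter count x bytes per parameter.
# Ignores KV cache, activations, and framework overhead, which add more.
H100_GB = 80.0          # assumption: the 80 GB H100 variant
CONSUMER_GPU_GB = 24.0  # assumption: a high-end consumer card

BYTES_PER_PARAM = {"bf16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_gb(params_billion: float, precision: str) -> float:
    """Approximate weight memory in GB (1 GB = 1e9 bytes)."""
    return params_billion * BYTES_PER_PARAM[precision]


for size in (26, 31):
    for precision in BYTES_PER_PARAM:
        gb = weight_gb(size, precision)
        print(
            f"{size}B @ {precision}: ~{gb:.1f} GB "
            f"(H100: {'fits' if gb < H100_GB else 'too big'}, "
            f"24 GB card: {'fits' if gb < CONSUMER_GPU_GB else 'too big'})"
        )
```

At bf16, 31B parameters come to roughly 62 GB of weights, which leaves headroom on an 80 GB card. At 4-bit they shrink to about 15.5 GB, which is why quantized builds are plausible on consumer GPUs.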

The smaller E2B and E4B models are built for edge and mobile devices, running offline with low latency. If your product touches Android, IoT, or any on-device use case, this is a direct infrastructure unlock for your engineering team.

The Part Most Companies Will Miss Until It Is Too Late

Gemma 4 supports function calling, structured JSON outputs, 256K-token context windows, and more than 140 languages. It handles images, video, audio, and code generation natively across all model sizes.

That is not a chatbot feature set. That is a foundation for building autonomous agents that can manage workflows, interact with your APIs, and make decisions inside your product without human input at every step.
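Function calling with structured JSON output is what lets a model drive your APIs. The sketch below shows only the application side: parsing a JSON tool call and dispatching it to a registered Python function. The `{"name": ..., "arguments": {...}}` shape and the `get_order_status` tool are hypothetical. Gemma 4's actual tool-call format depends on its chat template and serving stack, so check the model card before relying on any specific shape.

```python
import json
from typing import Any, Callable

# Hypothetical tool registry: tool name -> Python callable.
TOOLS: dict[str, Callable[..., Any]] = {}


def tool(fn: Callable[..., Any]) -> Callable[..., Any]:
    """Register a function so the model can call it by name."""
    TOOLS[fn.__name__] = fn
    return fn


@tool
def get_order_status(order_id: str) -> dict:
    # Stand-in for a real internal API call (hypothetical).
    return {"order_id": order_id, "status": "shipped"}


def dispatch(model_output: str) -> Any:
    """Parse a JSON tool call emitted by the model and run the matching tool."""
    call = json.loads(model_output)
    if not isinstance(call, dict):
        raise ValueError("tool call must be a JSON object")
    name = call.get("name")
    if name not in TOOLS:
        raise ValueError(f"unknown tool: {name!r}")
    args = call.get("arguments", {})
    if not isinstance(args, dict):
        raise ValueError("arguments must be a JSON object")
    return TOOLS[name](**args)


# Example: a hard-coded model response requesting a tool call.
result = dispatch('{"name": "get_order_status", "arguments": {"order_id": "A-1001"}}')
print(result)
```

Rejecting unknown tool names and non-object arguments keeps a malformed or unexpected model response from reaching your systems. In an agent loop, the tool result would be sent back to the model to inform its next step.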

Most companies will spend the next six months reading about it. The ones building with it now will be done before the others even start.

The Real Risk Is Not Adopting the Wrong Model

Analysts at Gartner caution that open source models like Gemma 4 are not a fit for every use case. Complex financial modeling and regulated workflows may still require proprietary systems with built-in compliance guardrails.

The risk is not picking the wrong model. The risk is having no structured framework to evaluate which AI belongs where inside your business, and making costly integration decisions without that clarity.

An AI Rewrote Its Own Code Overnight and Beat Every Human Engineer. Your Team Is Next.

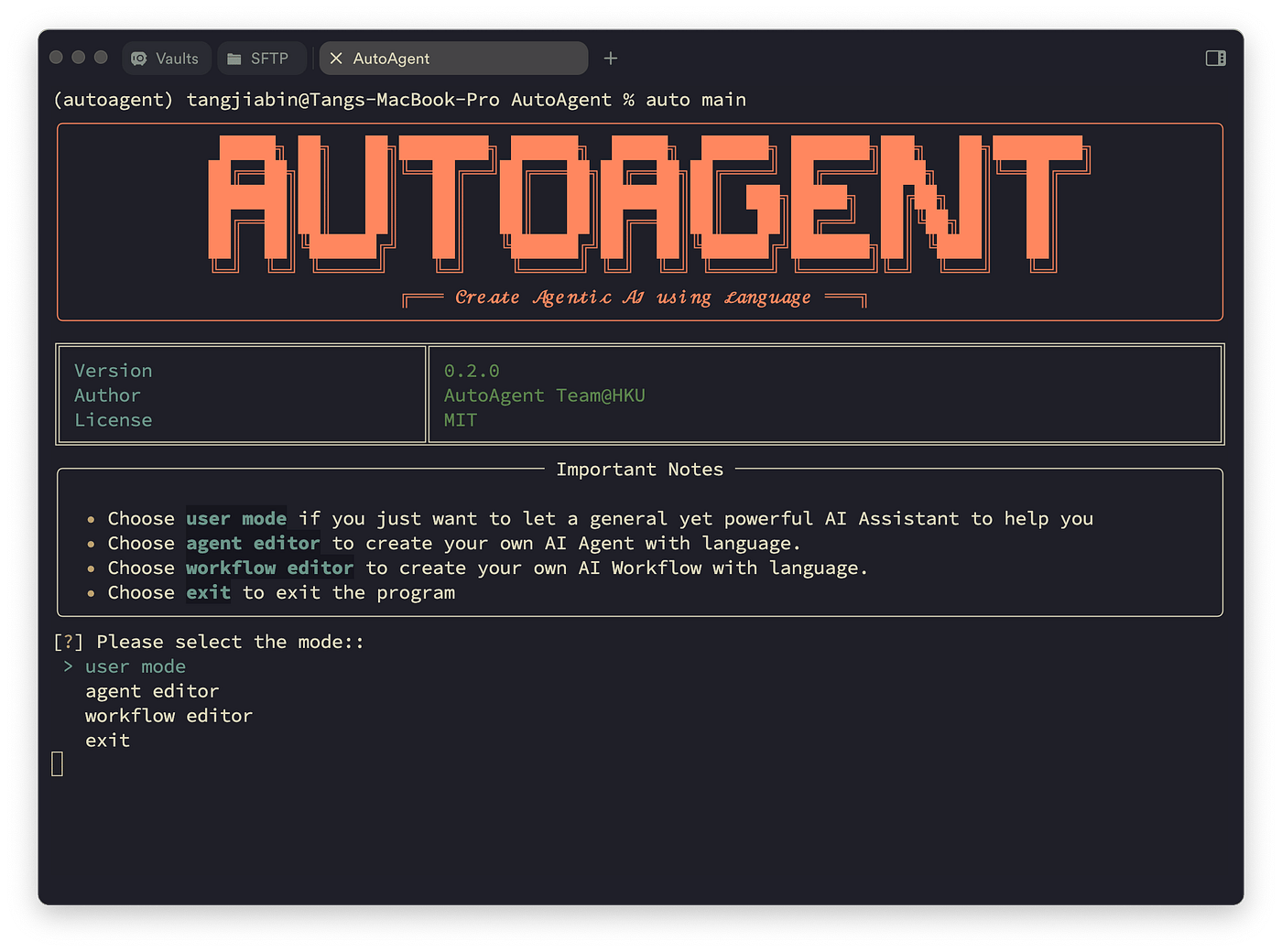

AutoAgent is an open-source library that lets a meta-agent engineer and optimize its own agent harness without a single human touching the code. Here is why that changes everything for your AI roadmap right now.

Your Engineers Are Stuck in a Loop That AI Just Escaped

Picture your best AI engineer right now. She is not building new features. She is tweaking a system prompt, running a benchmark, reading failure logs, adjusting a tool definition, and starting over. Again.

This is the prompt-tuning loop. It is one of the most expensive and least visible costs in any company building with AI. And most organizations accept it as the price of doing business with agents.

AutoAgent, built by Kevin Gu at thirdlayer.Inc, proves that assumption wrong. A meta-agent can take over that entire cycle, run it overnight, and come back with a better-performing harness than your team would have produced in weeks.

What "Self-Optimizing" Actually Means for Your Business

AutoAgent works by separating your role from the machine's role. You write a plain Markdown file that describes what kind of agent you need. The meta-agent handles everything else.

It reads your directive, inspects the agent file, runs the benchmark, checks the score, rewrites the parts that underperformed, and repeats until it cannot improve further. No human touches the agent code directly.

The human's job shifts from engineer to director. You set the goal. The AI figures out how to reach it. That is not a subtle change in workflow. That is a fundamental shift in how AI development works inside your company.

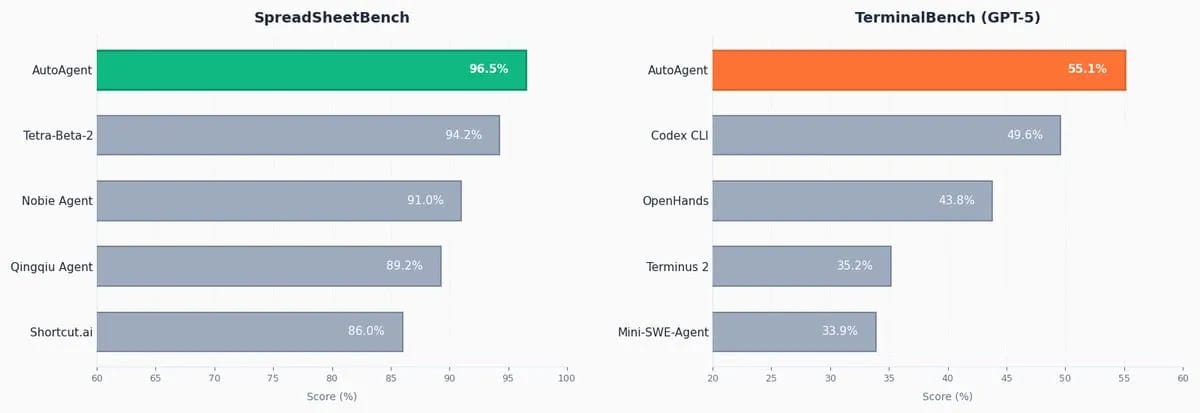

The Part That Should Make Your CTO Pay Attention

The benchmark results are not the story. The architecture is. AutoAgent is domain-agnostic by design. Any task that can be scored, whether that is spreadsheet analysis, terminal operations, code review, or your own custom internal workflow, can become a target for autonomous self-optimization.

Your team does not need to rebuild anything. The tasks run inside Docker containers using an open format. Plug in your own benchmarks and let the meta-agent improve against them overnight, on repeat.

How AutoAgent replaces your prompt-tuning cycle

1. You write a program.md, a plain Markdown file containing your agent directive.
2. The meta-agent reads your goal, inspects the agent configuration, and runs it against your benchmark.
3. Every experiment is logged with a numeric score, then kept if it improves on the best so far and discarded if not.
4. The loop runs overnight and returns a fully optimized agent harness.
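The keep-if-better cycle described above is, at its core, a greedy hill climb over agent configurations. The sketch below is not AutoAgent's code or API. `propose_edit` stands in for the meta-agent rewriting the harness, and `run_benchmark` stands in for a scored benchmark run. Both are hypothetical toy functions so that the loop runs on its own.

```python
import random
from dataclasses import dataclass

# Hypothetical toy benchmark: a "harness" is a dict of settings, and the
# score rewards settings close to a hidden target the optimizer cannot see.
TARGET = {"temperature": 0.2, "max_steps": 12}


def run_benchmark(harness: dict) -> float:
    """Stand-in for a scored benchmark run; returns a score in (0, 1]."""
    error = abs(harness["temperature"] - TARGET["temperature"])
    error += abs(harness["max_steps"] - TARGET["max_steps"]) / 10
    return 1.0 / (1.0 + error)


def propose_edit(harness: dict, rng: random.Random) -> dict:
    """Stand-in for the meta-agent rewriting one part of the harness."""
    candidate = dict(harness)
    if rng.random() < 0.5:
        t = candidate["temperature"] + rng.choice([-0.1, 0.1])
        candidate["temperature"] = round(min(1.0, max(0.0, t)), 2)
    else:
        candidate["max_steps"] = max(1, candidate["max_steps"] + rng.choice([-1, 1]))
    return candidate


@dataclass
class Experiment:
    harness: dict
    score: float
    kept: bool


def optimize(harness: dict, budget: int, patience: int, seed: int = 0):
    """Greedy keep-if-better loop with an experiment log.

    Stops after `budget` experiments, or earlier once `patience` proposals
    in a row fail to beat the best score (a proxy for "cannot improve further").
    """
    rng = random.Random(seed)
    best, best_score = harness, run_benchmark(harness)
    log: list[Experiment] = []
    misses = 0
    for _ in range(budget):
        candidate = propose_edit(best, rng)
        score = run_benchmark(candidate)
        kept = score > best_score
        log.append(Experiment(candidate, score, kept))  # every experiment is logged
        if kept:
            best, best_score, misses = candidate, score, 0
        else:
            misses += 1  # candidate discarded; keep searching from the current best
            if misses >= patience:
                break
    return best, best_score, log


start = {"temperature": 0.8, "max_steps": 4}
best, score, log = optimize(start, budget=200, patience=30)
print(best, round(score, 3), f"{sum(e.kept for e in log)} kept of {len(log)} experiments")
```

To get the general shape of a real system, you would replace `propose_edit` with an LLM call that edits prompts or tool definitions and replace `run_benchmark` with your containerized task suite. A held-out set of tasks is probably also worth keeping, since a greedy search can overfit to the benchmark it scores against.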

One Risk Most Teams Will Not See Coming

AutoAgent surfaces a finding that matters beyond the benchmarks. A Claude meta-agent appeared to diagnose failures more accurately when optimizing a Claude task agent than when optimizing one built on a different model family.

That means the model pairing inside your self-optimizing loop can affect the quality of results. If you build without a clear data and model governance layer, you may run hundreds of overnight experiments and not know why performance varies.
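One way to get that visibility is to record which model pairing produced each experiment and compare scores per pairing. The sketch below assumes a simple in-memory log. The model names and scores are placeholders, not results from AutoAgent.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class ExperimentRecord:
    meta_model: str   # model running the optimization loop
    task_model: str   # model inside the agent being optimized
    benchmark: str
    score: float


def mean_score_by_pairing(records: list[ExperimentRecord]) -> dict[tuple[str, str], float]:
    """Group experiments by (meta_model, task_model) and average their scores."""
    groups: dict[tuple[str, str], list[float]] = defaultdict(list)
    for r in records:
        groups[(r.meta_model, r.task_model)].append(r.score)
    return {pair: mean(scores) for pair, scores in groups.items()}


# Placeholder data for illustration only.
records = [
    ExperimentRecord("meta-A", "task-A", "internal-qa", 0.71),
    ExperimentRecord("meta-A", "task-A", "internal-qa", 0.75),
    ExperimentRecord("meta-A", "task-B", "internal-qa", 0.62),
    ExperimentRecord("meta-A", "task-B", "internal-qa", 0.58),
]
for pair, avg in sorted(mean_score_by_pairing(records).items()):
    print(pair, round(avg, 3))
```

In practice you would compare pairings on the same benchmark, with enough runs per pairing to tell a real difference apart from run-to-run noise.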

The companies that will use AutoAgent well are the ones that already have visibility into their AI pipeline. The ones that do not will generate noise at scale instead of improvement.

The Shift Has Already Happened. The Question Is Whether You Are Ready for It.

AutoAgent is free, open-source, and running in production environments right now. The teams adopting it are not replacing their engineers. They are redirecting them toward decisions that only humans should be making.

The ones still running the prompt-tuning loop manually are spending the same hours for slower results. That gap will compound quickly over the next six months.

OpenAI Just Raised $122 Billion. Your AI Strategy Is Already Falling Behind.

The enterprise AI race just got a $122 billion accelerant. Here is what it exposes about the gap between companies that are AI-ready and those that only think they are.

$122B: raised by OpenAI to scale enterprise AI, April 2026

Most Companies Are Deploying AI on Foundations That Will Not Hold

Your company adopted an AI tool last year. Maybe two. Your team is using ChatGPT for work, your developers are on Codex, and leadership is satisfied. Adoption is up. The budget is spent. Boxes are ticked.

But here is the problem no internal report is surfacing: you are running AI tools on top of data that has never been cleaned, governed, or structured for agent-level workloads.

That gap did not matter much when AI was a productivity add-on. It matters enormously now that OpenAI is explicitly building AI into the operational core of how businesses run, with 9 million paying business users and GPT-5.4 driving record engagement across agentic workflows.

The Signal Hidden Inside the Fundraise

OpenAI says enterprise now makes up more than 40% of its revenue and is on track to match consumer revenue by the end of 2026. That is not a product update. That is a declaration that AI is becoming the operating layer of business itself.

Codex already serves over 2 million weekly users, growing more than 70% month over month. That growth does not come from companies experimenting. It comes from companies that have committed to building with AI at the infrastructure level.

The companies scaling that fast have one thing in common: their data is ready for what the tools demand. Yours may not be.

Why "We Already Have AI Tools" Is the Most Expensive Mistake Right Now

Agentic AI does not forgive messy data. When a GPT-5.4 agent is running workflows autonomously, it pulls from whatever data sits underneath it. If that data is inconsistent, siloed, or ungoverned, the agent makes decisions based on a broken foundation.

Most companies will not discover this until an agent surfaces the wrong output at the wrong moment. By then, the cost is not a subscription fee. It is a rollback, a trust problem, and months of remediation.
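A minimal guard against this is a validation gate that checks records before an agent is allowed to act on them. The sketch below assumes a hypothetical customer-record schema (`customer_id`, `email`, `country`, `updated_at`). The specific rules are illustrative, and a real pipeline would apply your own schema, probably through a dedicated validation tool.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class Record:
    customer_id: str
    email: str
    country: str
    updated_at: datetime


ALLOWED_COUNTRIES = {"US", "GB", "DE", "IN"}  # hypothetical reference list
MAX_AGE = timedelta(days=365)                 # hypothetical freshness rule


def problems(record: Record, now: datetime) -> list[str]:
    """Return the reasons a record is unfit for agent use (empty list = OK)."""
    issues = []
    if not record.customer_id.strip():
        issues.append("missing customer_id")
    if "@" not in record.email:
        issues.append("malformed email")
    if record.country not in ALLOWED_COUNTRIES:
        issues.append(f"unknown country code {record.country!r}")
    if now - record.updated_at > MAX_AGE:
        issues.append("stale record")
    return issues


def gate(records: list[Record], now: datetime) -> tuple[list[Record], list[tuple[str, list[str]]]]:
    """Split records into ones an agent may use and ones quarantined with reasons."""
    clean: list[Record] = []
    quarantined: list[tuple[str, list[str]]] = []
    for r in records:
        found = problems(r, now)
        if found:
            quarantined.append((r.customer_id, found))
        else:
            clean.append(r)
    return clean, quarantined


now = datetime(2026, 4, 1, tzinfo=timezone.utc)
records = [
    Record("c-1", "ana@example.com", "US", datetime(2026, 1, 5, tzinfo=timezone.utc)),
    Record("c-2", "bob-at-example.com", "USA", datetime(2023, 6, 1, tzinfo=timezone.utc)),
]
clean, quarantined = gate(records, now)
print(len(clean), quarantined)
```

Quarantining with explicit reasons, rather than silently dropping bad rows, gives data owners something concrete to fix and keeps the agent from acting on records nobody has vetted.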

Your competitors are building on clean data pipelines right now. Are you?

What AI-Ready Companies Are Actually Doing Differently

They are not buying more tools. They are building the infrastructure that makes every tool they already have work correctly. That means governed data pipelines, unified data visibility, and a clear record of what their AI agents are touching and why.
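A clear record of what agents touch can start as an append-only log written every time an agent reads a data source. The sketch below is a hypothetical minimal version using JSON Lines, and its field names are assumptions. Production systems would more likely rely on a governed logging or data-lineage platform.

```python
import json
import tempfile
from datetime import datetime, timezone
from pathlib import Path


def log_agent_access(log_path: Path, agent: str, source: str, purpose: str, record_ids: list[str]) -> dict:
    """Append one audit entry (who, what, why, when) as a JSON line and return it."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "agent": agent,
        "source": source,
        "purpose": purpose,
        "record_ids": record_ids,
    }
    with log_path.open("a", encoding="utf-8") as f:
        f.write(json.dumps(entry) + "\n")
    return entry


def accesses_by_source(log_path: Path, source: str) -> list[dict]:
    """Answer 'which agents touched this source, and why?' from the audit log."""
    with log_path.open(encoding="utf-8") as f:
        return [e for e in map(json.loads, f) if e["source"] == source]


# Usage with hypothetical agent and source names.
audit_log = Path(tempfile.mkdtemp()) / "agent_access.jsonl"
log_agent_access(audit_log, "billing-agent", "crm.customers", "draft renewal reminders", ["c-1", "c-7"])
log_agent_access(audit_log, "support-agent", "tickets.open", "triage new tickets", ["t-42"])
for e in accesses_by_source(audit_log, "crm.customers"):
    print(e["agent"], e["purpose"], e["record_ids"])
```

Recording the purpose alongside the records read is what lets you later answer why an agent produced a given output, not just which data it saw.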

OpenAI is betting $122 billion that the next phase of AI is enterprise-native and operationally embedded. The companies positioned to benefit are the ones that treated data readiness as a strategic priority before the pressure arrived.

Journey Towards AGI

Research and advisory firm guiding businesses and their partners to meaningful, high-ROI change on the journey to Artificial General Intelligence.


We hope you enjoyed reading this edition of the newsletter as much as we enjoyed writing it.

Thank you for reading

-Shen & Towards AGI team