AI Feels Cheap Right Now. That’s the Most Misleading Signal

Falling token prices hide a deeper reality

Today, we’re diving into:

Hot Tea: Why today’s cheap AI won’t stay that way

AI news: How datacenter strikes are rewriting the rules of digital resilience

OpenAI: AI is reshaping distribution, advice, and operational models

Closed AI: AI OS platforms are becoming the backbone of enterprise intelligence

The Subsidy Phase of AI

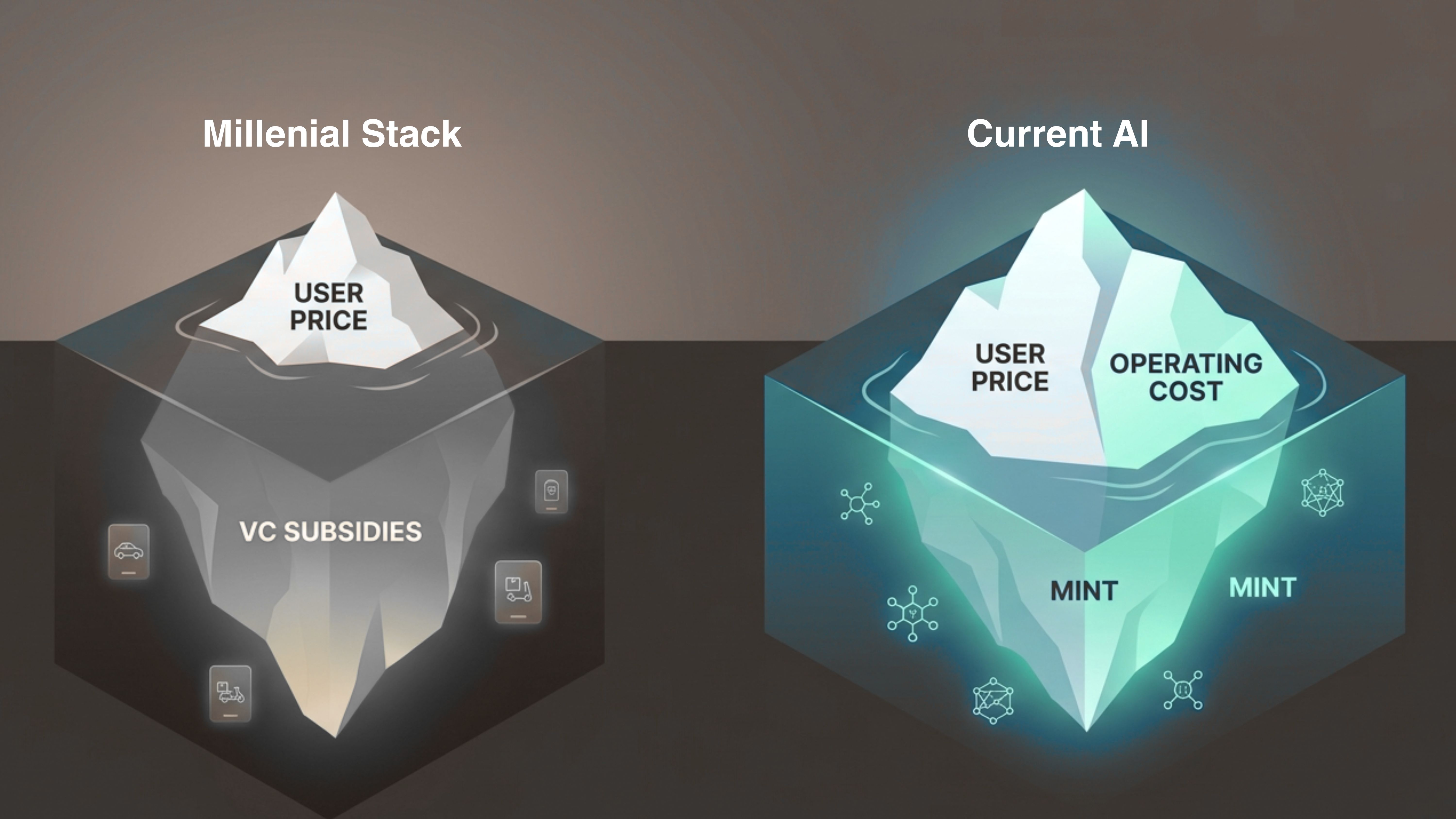

The easiest way to understand today’s AI pricing is to compare it to earlier Silicon Valley growth cycles.

For years, venture capital subsidized what eventually became known as the “millennial lifestyle stack,” where companies like Uber and DoorDash offered rides and deliveries at prices that did not reflect their real operating costs.

Even earlier, Amazon built its dominance on extremely low prices, free shipping, and years of prioritizing market share over profits.

These strategies were not sustainable business models in isolation; they were deliberate growth tactics designed to lock in users before the companies eventually moved toward profitability.

A similar dynamic is beginning to appear across the artificial intelligence industry. Over the past two years, models from companies such as OpenAI, Google, and Anthropic have become faster, more capable, and in many cases cheaper to use.

Aggregate token pricing, the cost associated with generating AI responses, has dropped significantly due to improvements in model efficiency and infrastructure optimization.

However, these falling prices mask a deeper economic tension. Even as the cost per query declines, the companies building and operating these models are still spending enormous amounts of capital to keep the systems running.

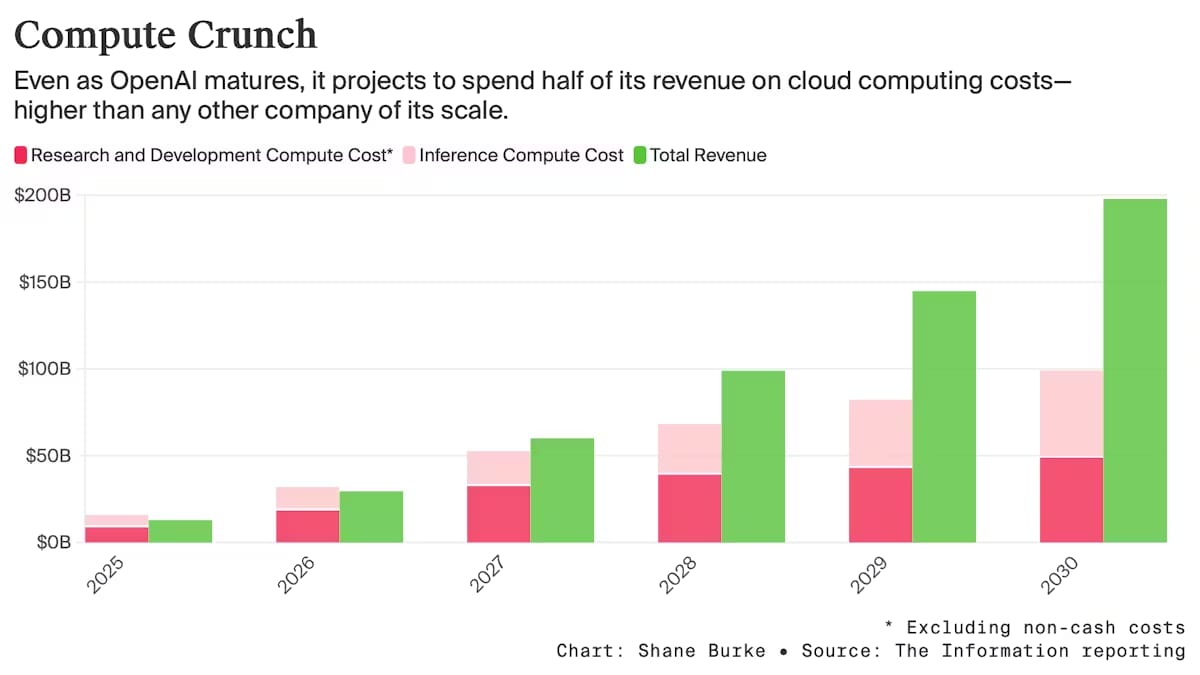

Financial projections illustrate this imbalance clearly.

OpenAI is expected to burn roughly $14 billion in 2026, up from about $8 to $9 billion in 2025, according to industry estimates.

Although some competitors have improved their financial performance, the overall economics of the sector remain fragile.

For example, Anthropic has improved its margins dramatically, moving from roughly -94% in 2024 to about +40% in 2025, yet its profitability remains sensitive to inference costs, the computing required every time a model generates an answer.

In simple terms, every complex query consumes GPU resources that must be paid for in real time, meaning the provider absorbs the cost whenever the price charged to users falls below the true infrastructure expense.
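To make the margin figures concrete, here is a minimal sketch of the per-query arithmetic. It assumes a simple gross-margin definition, (price - cost) / price, which the source does not specify, and the dollar amounts are hypothetical values chosen only to reproduce the quoted -94% and +40% margins. They are not actual company data.

```python
def gross_margin(price_per_query: float, cost_per_query: float) -> float:
    """Return gross margin as a fraction of revenue: (price - cost) / price."""
    if price_per_query <= 0:
        raise ValueError("price_per_query must be positive")
    return (price_per_query - cost_per_query) / price_per_query


# Hypothetical figures: charging $1.00 for work that costs $1.94 in compute
# means the provider absorbs $0.94 on every query.
loss_making = gross_margin(price_per_query=1.00, cost_per_query=1.94)

# Hypothetical figures: the same $1.00 price with compute cost cut to $0.60.
profitable = gross_margin(price_per_query=1.00, cost_per_query=0.60)

print(f"loss-making margin: {loss_making:.0%}")
print(f"profitable margin:  {profitable:.0%}")
```

Under this definition, a small rise in the cost of serving each query moves the margin directly, which is why profitability stays sensitive to inference costs.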

The infrastructure side of the industry is also evolving quickly as companies recognize where the real demand lies.

Early discussions around AI focused almost entirely on training large models, but the focus has increasingly shifted toward inference, the process that allows models to respond to millions of users simultaneously.

Hardware providers such as Nvidia are now prioritizing chips designed specifically to accelerate inference workloads, with new hardware expected to be unveiled at upcoming developer conferences.

These advances improve efficiency and reduce the cost per generated token, yet they also enable far greater usage, which in turn increases the overall demand for compute.
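A rough sketch of that dynamic: total compute spend is the unit cost multiplied by volume, so a lower cost per token can still produce a larger bill if usage grows faster than the cost falls. The numbers below are purely illustrative assumptions, not measurements.

```python
def total_compute_spend(cost_per_million_tokens: float, million_tokens: float) -> float:
    """Total spend = unit cost x volume."""
    return cost_per_million_tokens * million_tokens


# Hypothetical "before": $10 per million tokens, 1,000 million tokens served.
before = total_compute_spend(cost_per_million_tokens=10.0, million_tokens=1_000)

# Hypothetical "after": unit cost falls 5x, but usage grows 8x.
after = total_compute_spend(cost_per_million_tokens=2.0, million_tokens=8_000)

print(f"before: ${before:,.0f}")
print(f"after:  ${after:,.0f}")
print("total spend rose" if after > before else "total spend fell")
```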

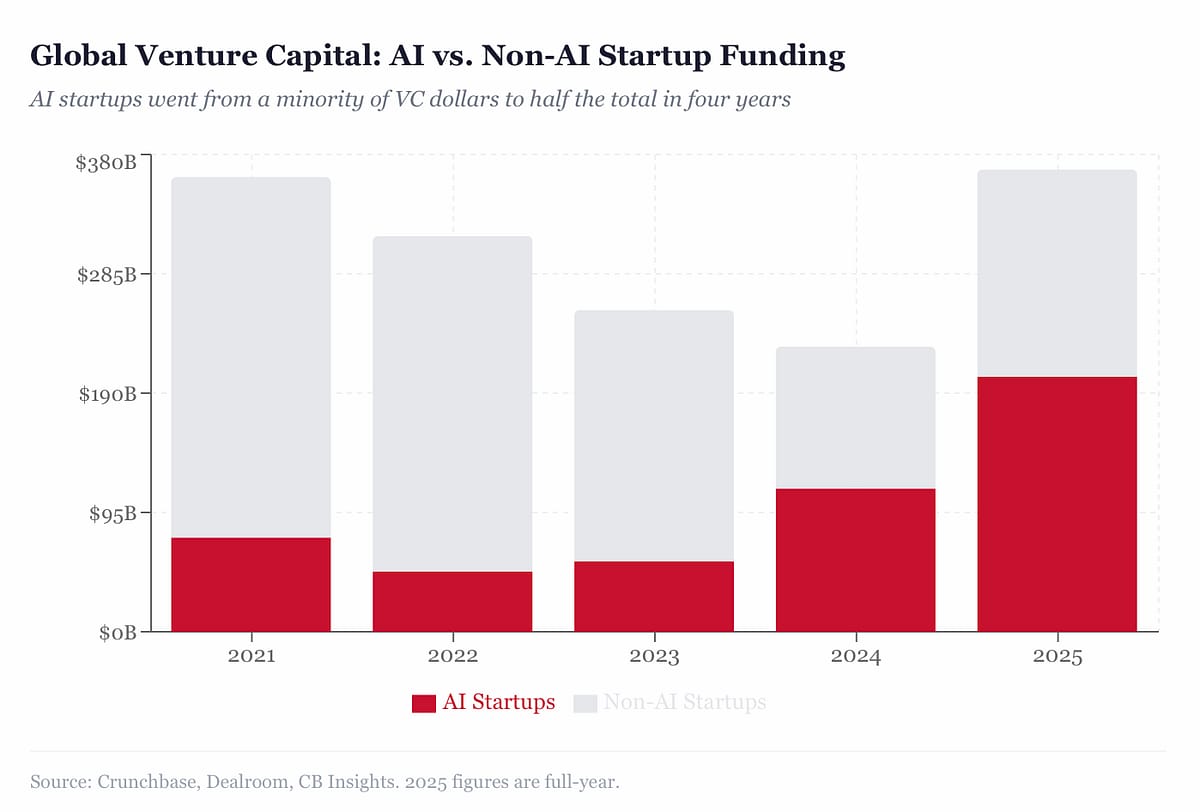

Another factor distorting the economics is the competitive race among AI labs to capture market share before moving toward public markets.

Venture capital continues to pour enormous sums into the sector, with Crunchbase data indicating that about 90% of venture funding in February went to AI startups, and two companies, OpenAI and Anthropic, captured roughly 74% of that capital.

These financial inflows allow providers to sustain aggressive pricing strategies that may not fully reflect the long-term cost of delivering large-scale AI services.

If your product, workflow, or startup depends heavily on large volumes of tokens, the safest strategy is to design for efficiency now rather than waiting for prices to rise later.

That means optimizing your prompts, reducing unnecessary inference calls, caching outputs where possible, and using smaller or specialized models when full-scale reasoning models are not required.
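Two of those tactics, caching outputs and routing simple requests to a smaller model, can be sketched in a few lines. This is a minimal illustration under stated assumptions: `CachedRouter`, `small_model`, and `large_model` are hypothetical names, the models are stand-in functions rather than real provider calls, and prompt length is used only as a crude proxy for task difficulty.

```python
import hashlib
from typing import Callable, Dict

ModelFn = Callable[[str], str]


class CachedRouter:
    """Cache responses and route short prompts to a cheaper model."""

    def __init__(self, small_model: ModelFn, large_model: ModelFn,
                 small_prompt_limit: int = 200) -> None:
        self.small_model = small_model
        self.large_model = large_model
        self.small_prompt_limit = small_prompt_limit
        self.cache: Dict[str, str] = {}
        self.model_calls = 0
        self.cache_hits = 0

    @staticmethod
    def _cache_key(prompt: str) -> str:
        # Collapse whitespace so trivially different prompts share one entry.
        normalized = " ".join(prompt.split())
        return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

    def complete(self, prompt: str) -> str:
        key = self._cache_key(prompt)
        if key in self.cache:
            self.cache_hits += 1  # no inference call, no token spend
            return self.cache[key]
        # Assumption: short prompts are simple enough for the small model.
        if len(prompt) <= self.small_prompt_limit:
            model = self.small_model
        else:
            model = self.large_model
        self.model_calls += 1
        answer = model(prompt)
        self.cache[key] = answer
        return answer


# Stand-in models; a real deployment would call a provider SDK here.
def small_model(prompt: str) -> str:
    return "small:" + prompt[:20]


def large_model(prompt: str) -> str:
    return "large:" + prompt[:20]


router = CachedRouter(small_model, large_model)
first = router.complete("Summarize this ticket")
second = router.complete("Summarize   this ticket")  # whitespace variant, served from cache
long_answer = router.complete("x" * 500)             # long prompt, routed to the large model
print(router.model_calls, router.cache_hits)
```

In practice the routing rule would likely be based on task type or a classifier rather than prompt length, and the cache would need an expiry policy. The point of the sketch is only that both tactics reduce paid inference calls.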

More importantly, you should focus on using AI to generate revenue rather than simply reduce costs or automate tasks.

In a market where subsidies are gradually fading, your advantage will come from designing for sustainable AI economics rather than assuming today’s cheapest phase of the technology will last forever.

Datacenters Are Now Part of the Battlefield

If you still think of datacenters as neutral infrastructure, recent events should change that assumption.

During the escalating conflict involving Iran and regional powers aligned with the US and Israel, Iranian Shahed-136 drones reportedly struck multiple commercial facilities operated by Amazon Web Services in the UAE and near Bahrain.

Iranian state media claimed the strike was intended to examine the role these facilities might play in supporting military or intelligence operations tied to Western alliances.

Regardless of the precise military objective, the immediate effect was clear: millions of residents in Dubai and Abu Dhabi suddenly found themselves unable to perform routine digital actions like paying for taxis, ordering food delivery, or checking banking apps.

If your company relies on cloud platforms or global infrastructure, you are building on systems that now sit inside geopolitical fault lines.

The AWS facilities that were hit were not military bases; they were commercial datacenters supporting everyday digital activity. Yet the disruption quickly rippled through payments, mobility apps, and financial services used by millions of people.

That should change how you think about infrastructure planning. When a single strike on a datacenter can interrupt daily economic activity for an entire region, resilience stops being an engineering detail and becomes part of your strategic architecture.

You should also understand why these facilities are increasingly attractive targets. Modern datacenters are among the most expensive and strategically important buildings in the world.

They host cloud platforms, financial systems, logistics networks, and increasingly the massive GPU clusters required to run AI systems.

Companies like OpenAI and Anthropic rely on enormous compute infrastructure, often powered by hardware from Nvidia, to operate the inference workloads that serve millions of users.

If your products depend on those platforms, your software ultimately runs inside facilities that require billions of dollars to build and years to replace.

That combination of economic value, technical concentration, and geopolitical symbolism makes them natural strategic targets during conflicts.

You should also consider the second layer of this shift: warfare itself is becoming increasingly dependent on AI.

Reports from recent conflicts indicate that AI systems are already helping identify targets, prioritize intelligence signals, recommend weapons systems, and evaluate legal parameters for potential strikes.

When military systems rely on AI infrastructure, the datacenters hosting those systems inevitably become part of the strategic landscape.

So, if your organization is building AI-driven products, you are indirectly participating in that same infrastructure ecosystem.

Thus, your infrastructure strategy can no longer focus only on latency, cost, and scalability. You need to think about redundancy across regions, multi-cloud deployments, and the ability to move workloads quickly if a specific geography becomes unstable.

Your architecture should assume that disruptions, whether caused by conflict, political pressure, or infrastructure failure, are possible.
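As a minimal sketch of what "the ability to move workloads quickly" can mean in code, the function below picks the first healthy deployment target from an ordered preference list that spans more than one provider. The target names, the `health` table, and the `first_healthy_target` helper are all hypothetical. A real system would rely on actual health checks and its providers' own failover tooling.

```python
from typing import Callable, Dict, List, Optional


def first_healthy_target(targets: List[str],
                         is_healthy: Callable[[str], bool]) -> Optional[str]:
    """Return the first healthy target in preference order, or None if all are down."""
    for target in targets:
        if is_healthy(target):
            return target
    return None


# Hypothetical preference order across two cloud providers and three regions.
preferred = ["cloud-a/region-1", "cloud-a/region-2", "cloud-b/region-1"]

# Hypothetical health snapshot: the primary region is unavailable.
health: Dict[str, bool] = {
    "cloud-a/region-1": False,
    "cloud-a/region-2": True,
    "cloud-b/region-1": True,
}

# Unknown targets are treated as unhealthy.
active = first_healthy_target(preferred, lambda t: health.get(t, False))
print(f"routing traffic to: {active}")
```

The design choice worth noting is that the preference list includes a second provider, so the loss of one provider's entire footprint in a geography still leaves a fallback.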

The organizations that adapt to this reality will treat digital infrastructure the same way global companies eventually learned to treat supply chains: as systems that must be resilient to geopolitical shocks.

AI’s Wake-Up Call for Insurance and Wealth Management

AI is already reshaping how value flows through insurance and wealth management.

If you are running operations or advising clients today, you need to understand that efficiency gains are no longer enough.

Some tools have exposed how quickly AI can disintermediate traditional advisory and brokerage models.

Investors are already responding: share-price declines highlight the fragility of incumbents that fail to embrace agentic systems capable of automating multistep processes.

For you, this is a signal that reliance on manual workflows, siloed data, or outdated technology stacks could directly erode market share before you even realize it.

AI can now handle underwriting, claims processing, and portfolio analysis with minimal human intervention, compressing turnaround times from days or weeks to hours.

Thus, you are facing a future where your competitors can provide same-day policy quotes, generate fully personalized wealth strategies, and maintain compliance across multiple jurisdictions with near-zero errors.

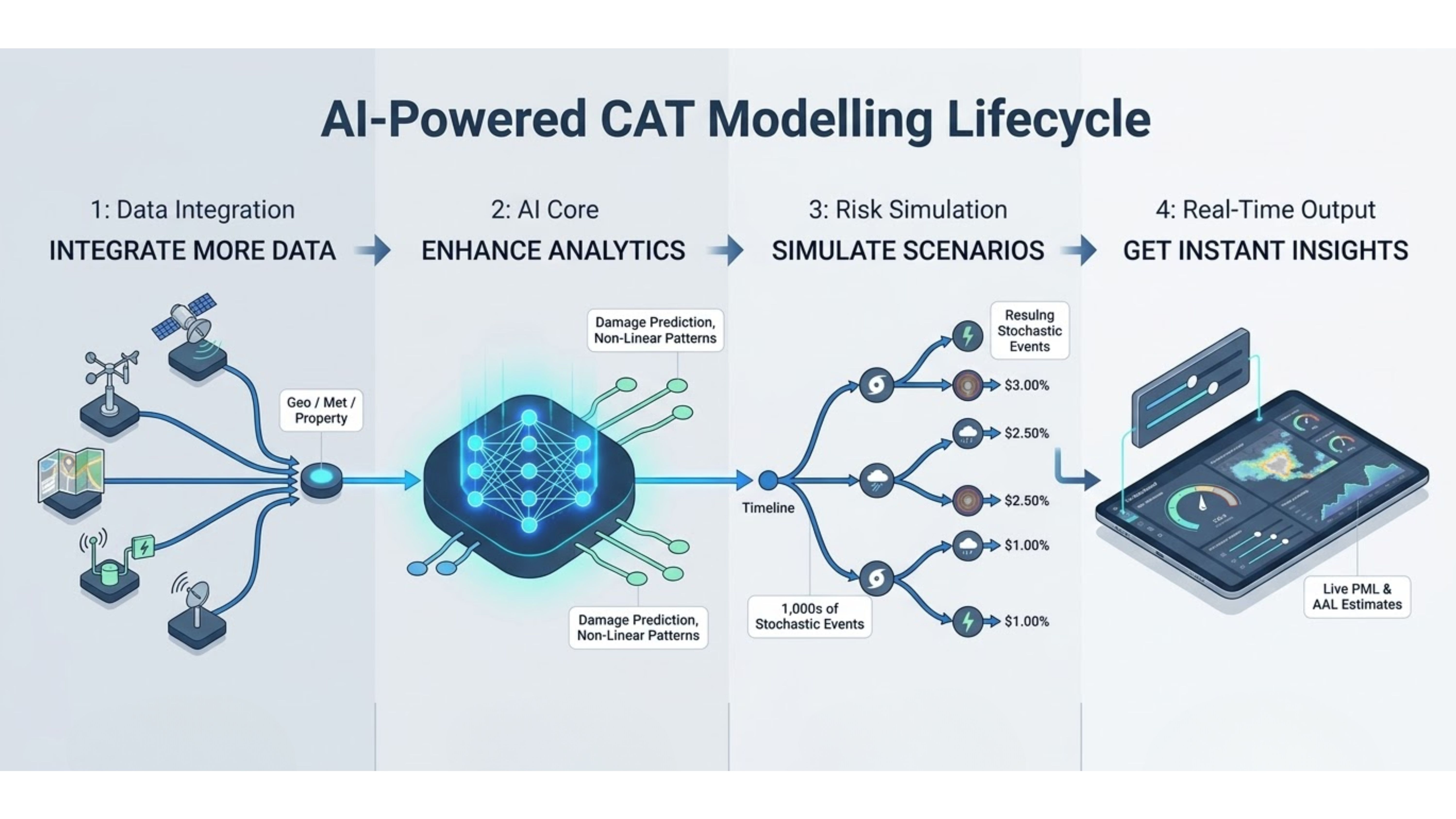

If you are still relying on legacy systems or partial automation, you risk being outpaced in both cost efficiency and client experience. Every manual bottleneck, whether it’s slow treaty analysis, overloaded underwriting pipelines, or delayed CAT modeling, is a vulnerability in your ability to compete.

This is where Reinsured.AI can transform your operations.

For your reinsurance workflows, specialized AI agents can tackle complex processes with speed and precision: reconciliations that previously took weeks now happen in hours; treaty analysis accelerates by 60%; catastrophe pricing and quote generation become same-day capabilities.

You can also process three times more submissions, optimize retrocession procurement, and monitor regulatory compliance in real time, all while reducing operational costs by up to 40%.

For your business, this doesn’t just improve efficiency; it allows you to scale without adding headcount, capture opportunities that were previously missed, and reinvest saved resources into client-facing or strategic initiatives.

By embedding AI directly into core operations, from bordereaux processing to CAT pricing, you effectively reposition your company to compete on speed, accuracy, and operational excellence.

So, you need to rethink your business model around these capabilities, deciding which processes can be fully automated, which require human oversight, and how AI can expand the scope and scale of your service delivery.

Those who act now will not only reduce operating costs and increase throughput, but also set themselves apart in a market that will soon demand both speed and intelligence as the baseline.

The era of incremental improvement is over; your strategy must focus on leveraging AI to reinvent distribution, advice, and operational performance before competitors capture the advantage.

The Rise of AI Operating Systems: Your Next Strategic Imperative

AI is no longer just a tool; it’s becoming the backbone of how enterprises operate.

Platforms like Palantir’s newly announced AI operating system reference architecture illustrate the shift: end-to-end AI infrastructure now spans hardware, software, orchestration, and application deployment.

If your organization relies on AI for insights, decision-making, or operational automation, you need to understand the mechanics.

Systems like Palantir’s leverage NVIDIA Blackwell Ultra GPUs, Kubernetes-based orchestration, and integrated model-to-data connectivity, ensuring low-latency, scalable AI operations while maintaining strict control over data sovereignty.

For you, this isn’t just an infrastructure upgrade; it’s about controlling the speed, reliability, and legal compliance of every AI-powered workflow in your enterprise.

You need to ask yourself:

1) How quickly can your teams deploy new AI models?

2) How resilient are your workflows to spikes in demand or hardware constraints?

3) And how much of your AI stack can you truly trust to scale without constant human intervention?

Modern AI operating systems are designed to handle these challenges, unifying compute, networking, model orchestration, and governance.

They allow your AI initiatives to move from experimental pilots to mission-critical deployments, reduce latency, and minimize operational friction, but only if you’re intentional about architecture and execution.

For organizations navigating this transformation, success comes not just from adopting AI but from orchestrating it strategically. This is where the right guidance matters.

Executives and technology leaders are increasingly turning to structured training and coaching programs to bridge the gap between AI potential and business impact.

Programs like those offered by TowardsAGI help you translate complex AI architectures into actionable strategy, refine workflows for agentic systems, and ensure teams can deploy AI confidently across functions from operations to product and data engineering.

Through 1:1 coaching, scenario-based simulations, and team upskilling, you gain the skills to evaluate AI operating systems, understand the implications of agentic models, and lead AI-driven transformations confidently.

AI operating systems are no longer optional; they’re the backbone of modern enterprise strategy.

As we’ve seen with advanced architectures, the ability to unify compute, data, orchestration, and governance directly impacts speed, reliability, and scalability.

For your organization, this means moving beyond pilot projects and embedding AI as a core operational layer that drives decision-making and execution.

The companies that master AI OS frameworks will not only reduce friction and latency but also gain the agility to deploy new models rapidly, scale intelligently, and respond to changing market demands.

Journey Towards AGI

A research and advisory firm guiding you on the journey to Artificial General Intelligence

Know Your Inference: Maximising GenAI impact on performance and efficiency.

Model Context Protocol: Connect with us, and get end-to-end guidance on AI implementation.

Your opinion matters!

We hope you enjoyed reading this newsletter as much as we enjoyed writing it.

Share your experience and feedback with us below, because we take your critique seriously.

Thank you for reading

-Shen & Towards AGI team