3 Key Areas Where AGI Still Falls Short

Why we’re years away from true human-level intelligence

Today, we’re diving into:

Hot Tea: AGI is still evolving

Open AI: Autonomous AI adoption is accelerating

Open AI: Shoppers hold brands accountable when AI fails

Closed AI: Major CEOs are stepping down, citing AI as a key driver

Stop Believing the Hype

Demis Hassabis, CEO of Google DeepMind, was asked a pointed question: Can today’s AI systems truly match human intelligence? His answer was simple: “I don’t think we are there yet.”

AGI refers to systems capable of reasoning across domains, solving problems without preprogrammed instructions, and adapting dynamically to new situations.

Despite the hype, even the most advanced AI systems, like DeepMind’s Gemini, share three technical limitations that you need to understand if you want to stay ahead in AI strategy.

1. Continual Learning

Current AI systems rely on offline training. They ingest massive datasets, learn patterns, and are then deployed in a static state, so they can’t update their internal representations in real time based on new experiences.

For example, if a deployed model encounters a subtle shift in a user’s preferences, it can’t incorporate that change into its learned parameters without retraining. Humans, by contrast, continuously integrate new information from their environment.

This gap limits today’s AI wherever personalization, dynamic context awareness, and adaptive problem solving matter, such as autonomous operations, financial modeling, and real-time healthcare diagnostics.
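A toy sketch of the difference, assuming a single-weight model of one user’s preference (all names and numbers are illustrative, not from any real system): the offline model is trained once on historical data and then frozen, while the online model takes a small gradient step on every new observation. Real continual learning in large models is much harder, since naive updates can overwrite earlier knowledge (catastrophic forgetting); this only shows the mechanism described above.

```python
from typing import List, Tuple

def sgd_step(weight: float, x: float, y: float, lr: float) -> float:
    """One stochastic-gradient step on the squared error 0.5 * (y - weight * x) ** 2."""
    error = y - weight * x
    return weight + lr * error * x

def train_offline(data: List[Tuple[float, float]], lr: float = 0.1, epochs: int = 50) -> float:
    """Offline training: several passes over a fixed historical dataset, then stop."""
    weight = 0.0
    for _ in range(epochs):
        for x, y in data:
            weight = sgd_step(weight, x, y, lr)
    return weight

# Hypothetical history: the user's preference score followed y = 2x.
history = [(1.0, 2.0), (2.0, 4.0), (0.5, 1.0)]
frozen_w = train_offline(history)  # deployed and never updated again

# After deployment the user's behaviour shifts to y = 3x.
new_stream = [(1.0, 3.0), (2.0, 6.0), (0.5, 1.5)] * 20

online_w = frozen_w
for x, y in new_stream:
    online_w = sgd_step(online_w, x, y, lr=0.1)  # update on every new observation

print("frozen model predicts:", round(frozen_w * 1.0, 2))  # about 2.0, out of date
print("online model predicts:", round(online_w * 1.0, 2))  # about 3.0, tracks the shift
```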

2. Long-Term Planning

Today’s AI systems excel at tasks with immediate or short-term objectives but struggle with strategic planning over extended time frames. While they can optimize a plan for the next few steps, they lack the hierarchical goal representations that humans naturally construct.

Imagine asking an AI system to manage a multi-year supply chain optimization project. Today’s systems can generate immediate scheduling solutions but cannot anticipate compounding risks, shifting market dynamics, or cascading dependencies over months or years.

Humans excel here because we can encode long-term constraints, simulate multiple future scenarios, and make trade-offs. Current AI lacks this deep temporal reasoning.
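To make the contrast concrete, here is a toy Monte Carlo sketch. Everything in it is hypothetical: two invented suppliers, a 36-month horizon, and a disruption that, once it happens, adds cost every remaining month (a stand-in for cascading dependencies). A greedy planner that only looks at next month’s price picks the cheaper supplier; simulating many possible futures shows that the compounding risk makes the other supplier cheaper over the whole horizon. It illustrates scenario simulation as a planning idea, not how any particular AI system plans.

```python
import random

HORIZON_MONTHS = 36  # hypothetical multi-year planning horizon

# Hypothetical suppliers: (unit cost per month, monthly disruption probability)
SUPPLIERS = {
    "cheap": (10.0, 0.05),
    "reliable": (12.0, 0.0),
}
DISRUPTION_PENALTY = 8.0  # extra monthly cost once a disruption cascades

def greedy_choice(suppliers):
    """Short-horizon planner: pick whatever is cheapest right now."""
    return min(suppliers, key=lambda name: suppliers[name][0])

def simulate_cost(cost, p_disrupt, horizon, rng):
    """Total cost of one simulated future; a disruption persists once it starts."""
    disrupted = False
    total = 0.0
    for _ in range(horizon):
        if not disrupted and rng.random() < p_disrupt:
            disrupted = True
        total += cost + (DISRUPTION_PENALTY if disrupted else 0.0)
    return total

def scenario_choice(suppliers, horizon, n_scenarios=2000, seed=0):
    """Long-horizon planner: compare average cost over many simulated futures."""
    rng = random.Random(seed)
    expected = {}
    for name, (cost, p) in suppliers.items():
        runs = [simulate_cost(cost, p, horizon, rng) for _ in range(n_scenarios)]
        expected[name] = sum(runs) / n_scenarios
    return min(expected, key=expected.get), expected

if __name__ == "__main__":
    print("greedy:", greedy_choice(SUPPLIERS))  # cheap
    best, expected = scenario_choice(SUPPLIERS, HORIZON_MONTHS)
    print("scenario-based:", best, {k: round(v) for k, v in expected.items()})  # reliable
```

The design choice is the whole point: both planners see the same data, but only the second one asks what happens over the full horizon.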

3. Inconsistent Expertise

Even when an AI system is superhuman in certain domains, it can fail spectacularly in others. Hassabis cites a striking example: a system capable of solving International Mathematical Olympiad problems that still occasionally falters on elementary arithmetic when a question is phrased in an unusual way.

This jaggedness arises because current models generalize from learned patterns but are not inherently robust to distribution shifts.

Unlike human experts, who integrate conceptual understanding across contexts, today’s AI is highly sensitive to training biases, prompt wording, and changes in context.
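One practical consequence: strong performance on one phrasing says little about the next. A minimal paraphrase-consistency check, assuming you can wrap your model as a function from prompt to answer, asks the same question several ways and flags disagreement. `toy_model` and `consistency_check` are hypothetical names; the stub is a deliberately brittle regex that only understands “A plus B”, not a description of how LLMs work internally. In practice you would swap in a call to whichever model you use.

```python
import re
from typing import Callable, Dict, List

def toy_model(prompt: str) -> str:
    """Hypothetical, deliberately brittle 'model': it only recognises 'A plus B'."""
    match = re.search(r"(\d+)\s+plus\s+(\d+)", prompt)
    if match is None:
        return "unsure"
    return str(int(match.group(1)) + int(match.group(2)))

def consistency_check(model: Callable[[str], str], paraphrases: List[str]) -> Dict[str, object]:
    """Ask the same question several ways; report each answer and whether they all agree."""
    answers = {prompt: model(prompt) for prompt in paraphrases}
    return {"answers": answers, "consistent": len(set(answers.values())) == 1}

paraphrases = [
    "What is 17 plus 25?",
    "Compute 17 plus 25.",
    "You have 17 apples and receive 25 more. How many apples do you have?",
]
report = consistency_check(toy_model, paraphrases)
for prompt, answer in report["answers"].items():
    print(f"{answer:>7} <- {prompt}")
print("consistent:", report["consistent"])  # False: the third phrasing trips the model
```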

Until these gaps are addressed, today’s AI will remain a hybrid of specialized superhuman capabilities and surprisingly basic weaknesses. This isn’t a theoretical warning; it has immediate business implications.

You shouldn’t rely on current AI for critical decisions without human oversight; it can introduce subtle errors, operational risk, and strategic blind spots.

Autonomous AI Is Here. Are You Ready to Trust It?

Autonomous AI is no longer a speculative concept. According to EY’s 2026 AI Sentiment Report, 16% of people across 23 global markets have already used AI systems that act independently in the past six months.

Meanwhile, 84% of respondents have engaged with AI in some capacity, so the base of users ready to move from AI assistance to AI autonomy is already broad.

For businesses, this is both an opportunity and a wake-up call.

Autonomy in AI isn’t just about convenience; it fundamentally changes how decisions are made and executed. Self-driving vehicles, AI-managed shopping carts, autonomous travel bookings, and automated banking interactions are already being tried out by mainstream consumers.

In the so-called “Pioneer” markets, such as India, China, Brazil, and South Korea, 24% of users have already used fully autonomous AI, a clear indicator of where global adoption is heading.

But adoption outpaces trust. Two-thirds of respondents worry about hacking and breaches, nearly 70% insist on human oversight, and 73% fear they can’t distinguish AI-generated content from reality.

This is where the technical infrastructure behind autonomous AI becomes critical. It’s not enough to deploy agentic systems; organizations must build data pipelines, monitoring frameworks, and accountability layers that ensure AI decisions are reliable and auditable.

One practical step for firms is to use structured data platforms such as DataManagement.AI so that the AI’s autonomous decisions rest on verified, accurate, and lineage-tracked datasets.

This doesn’t just prevent errors; it provides a trust layer. By continuously validating input data, monitoring agentic decision logs, and implementing robust access controls, businesses can allow AI to act independently while maintaining governance.
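Independent of any particular platform, those three controls can be sketched as a thin layer that every agent action passes through, with a human sign-off for high-stakes actions. The `Governor` and `AgentAction` classes, the role map, the approval threshold, and the reviewer callback are hypothetical names chosen for illustration, not the API of DataManagement.AI or any other product; the audit log is a list of JSON strings standing in for a real log store.

```python
import json
import time
from dataclasses import dataclass, field
from typing import Callable, Dict, List

@dataclass
class AgentAction:
    """A decision proposed by a hypothetical autonomous agent."""
    agent_role: str
    action: str
    amount: float
    inputs: Dict[str, object]

@dataclass
class Governor:
    """Hypothetical governance layer: access control, input checks, audit log, human gate."""
    allowed: Dict[str, List[str]]           # role -> actions that role may take
    approval_threshold: float               # amounts above this need a human sign-off
    approve: Callable[[AgentAction], bool]  # human reviewer callback
    audit_log: List[str] = field(default_factory=list)

    def _log(self, act: AgentAction, outcome: str, reason: str) -> None:
        """Append one JSON line per decision so every outcome can be audited later."""
        self.audit_log.append(json.dumps({
            "ts": time.time(), "role": act.agent_role, "action": act.action,
            "amount": act.amount, "outcome": outcome, "reason": reason,
        }))

    def execute(self, act: AgentAction) -> str:
        # 1. Access control: is this role allowed to take this action at all?
        if act.action not in self.allowed.get(act.agent_role, []):
            self._log(act, "blocked", "role not permitted")
            return "blocked"
        # 2. Input validation: refuse to act on incomplete data.
        missing = [name for name, value in act.inputs.items() if value is None]
        if missing:
            self._log(act, "blocked", f"missing inputs: {missing}")
            return "blocked"
        # 3. Human oversight for high-stakes decisions.
        if act.amount > self.approval_threshold and not self.approve(act):
            self._log(act, "rejected", "human reviewer declined")
            return "rejected"
        # A real system would perform the action here; the sketch only records it.
        self._log(act, "executed", "all checks passed")
        return "executed"

# Usage: a reviewer stub that declines everything it is asked about.
gov = Governor(
    allowed={"procurement_agent": ["place_order"]},
    approval_threshold=10_000.0,
    approve=lambda act: False,
)
print(gov.execute(AgentAction("procurement_agent", "place_order", 2_500.0, {"sku": "A1", "vendor": "V9"})))   # executed
print(gov.execute(AgentAction("procurement_agent", "place_order", 50_000.0, {"sku": "A1", "vendor": "V9"})))  # rejected
print(gov.execute(AgentAction("procurement_agent", "issue_refund", 10.0, {"order": "O1"})))                   # blocked
print(gov.execute(AgentAction("procurement_agent", "place_order", 100.0, {"sku": None, "vendor": "V9"})))     # blocked
print(len(gov.audit_log))  # 4
```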

The technical demands of autonomous AI are nontrivial. Agents need real-time data ingestion, contextual reasoning, and dynamic prioritization of tasks to function safely in diverse environments.

For example, an AI agent autonomously managing a supply chain must reconcile inventory data, vendor schedules, and delivery constraints while adapting to unforeseen disruptions. Without clean, validated data driving these decisions, the risk of cascading failures skyrockets.
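A minimal sketch of that reconcile-before-acting step, using hypothetical record types and a hypothetical `decide` function: the agent first checks inventory and vendor data for inconsistencies, escalates to a human if it finds any, and only then compares what will be available by the delivery deadline against the order. A production system would validate far more (units, lead times, vendor reliability); this shows only the ordering of the checks.

```python
from dataclasses import dataclass
from datetime import date
from typing import List

@dataclass
class InventoryRecord:
    sku: str
    on_hand: int

@dataclass
class VendorShipment:
    sku: str
    quantity: int
    arrival: date

def validate(inventory: List[InventoryRecord], shipments: List[VendorShipment]) -> List[str]:
    """Return data problems found; an empty list means the inputs are usable."""
    problems = []
    known_skus = {r.sku for r in inventory}
    for r in inventory:
        if r.on_hand < 0:
            problems.append(f"{r.sku}: negative stock ({r.on_hand})")
    for s in shipments:
        if s.sku not in known_skus:
            problems.append(f"{s.sku}: shipment for unknown SKU")
        if s.quantity <= 0:
            problems.append(f"{s.sku}: non-positive shipment quantity")
    return problems

def available_by(sku: str, deadline: date,
                 inventory: List[InventoryRecord], shipments: List[VendorShipment]) -> int:
    """Units of `sku` available by `deadline`: stock on hand plus shipments arriving in time."""
    on_hand = sum(r.on_hand for r in inventory if r.sku == sku)
    arriving = sum(s.quantity for s in shipments if s.sku == sku and s.arrival <= deadline)
    return on_hand + arriving

def decide(sku: str, qty: int, deadline: date,
           inventory: List[InventoryRecord], shipments: List[VendorShipment]) -> str:
    """Commit to a delivery only when the data is clean and the numbers add up."""
    problems = validate(inventory, shipments)
    if problems:
        return "escalate: " + "; ".join(problems)  # hand off to a human, don't guess
    if available_by(sku, deadline, inventory, shipments) >= qty:
        return "commit"
    return "reschedule"

inventory = [InventoryRecord("A1", 40)]
shipments = [
    VendorShipment("A1", 30, date(2025, 3, 10)),
    VendorShipment("A1", 50, date(2025, 4, 20)),  # arrives after the deadline below
]
deadline = date(2025, 3, 31)
print(decide("A1", 60, deadline, inventory, shipments))   # commit (40 on hand + 30 arriving)
print(decide("A1", 100, deadline, inventory, shipments))  # reschedule (only 70 available in time)
bad_feed = shipments + [VendorShipment("B7", 10, date(2025, 3, 1))]
print(decide("A1", 60, deadline, inventory, bad_feed))    # escalate: B7: shipment for unknown SKU
```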

The EY report also reveals a nuanced adoption pattern: markets that normalize AI in low-stakes daily activities, such as route planning, content recommendations, and travel scheduling, create a foundation for higher-stakes delegation.

Users begin to trust AI where experience has been predictable, and that trust is reinforced when outcomes align with expectations. That’s why embedding continuous validation and transparency at every layer of your AI stack is not optional; it’s a competitive advantage.

Early movers can leverage autonomy to reduce operational friction, personalize at scale, and unlock entirely new business models.

58% of Shoppers Blame Brands for AI’s Mess-Ups

Recent research by Rithum and Studio’s Retail Dive found that 58% of shoppers blame the brand when AI tools provide incorrect information.

This is a wake-up call for business leaders: as AI becomes a trusted “advisor” in shopping journeys, your brand’s reputation is only as strong as the data driving these recommendations.

The survey of over 1,000 US and UK consumers highlights a critical trend.

Over 80% of shoppers under 44 have used AI-assisted tools in the last three months, relying on assistants built on large language models (LLMs), such as ChatGPT and Gemini, to explore products, compare options, and even narrow down purchase decisions.

Notably, high-income households use AI more frequently, leveraging it to reduce time spent browsing and increase confidence in their choices. Yet, the same AI systems that guide purchases are only as reliable as the underlying product data.

Thus, missteps in pricing, availability, or specifications directly erode consumer trust.

Price accuracy is the most sensitive metric. 67% of consumers rank it as their top concern in AI recommendations, followed by reviews (35%) and availability (34%). Yet 44% report encountering inaccuracies, with 16% abandoning the purchase entirely. For brands, these aren’t just statistics; they’re lost revenue and fractured loyalty.

From a technical point of view, the root of the problem is fragmented data pipelines.

Many organizations rely on multiple databases, delayed batch updates, and manual reconciliation: perfect conditions for AI to misrepresent product information.
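One common mitigation, sketched here independently of any particular product, is a publish gate: before a price reaches an AI shopping assistant, check that every source system has reported it recently and that all sources agree. The `PriceRecord` type, the one-hour freshness window, and the one-cent tolerance are hypothetical choices for illustration; the records passed in are assumed to describe a single SKU.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List, Optional

@dataclass
class PriceRecord:
    source: str        # hypothetical system name, e.g. "erp" or "storefront"
    sku: str
    price: float
    updated_at: datetime

def publishable_price(records: List[PriceRecord], now: datetime,
                      max_age: timedelta = timedelta(hours=1),
                      tolerance: float = 0.01) -> Optional[float]:
    """Return a price only if every source is fresh and all sources agree; else None."""
    if not records:
        return None
    if any(now - r.updated_at > max_age for r in records):
        return None  # a delayed batch update may be hiding a newer price
    prices = [r.price for r in records]
    if max(prices) - min(prices) > tolerance:
        return None  # sources disagree: reconcile before an AI agent quotes a price
    return prices[0]

now = datetime(2025, 6, 1, 12, 0)
in_sync = [
    PriceRecord("erp", "A1", 19.99, now - timedelta(minutes=5)),
    PriceRecord("storefront", "A1", 19.99, now - timedelta(minutes=20)),
]
stale_feed = [
    PriceRecord("erp", "A1", 17.99, now - timedelta(minutes=5)),
    PriceRecord("storefront", "A1", 19.99, now - timedelta(hours=26)),
]
print(publishable_price(in_sync, now))     # 19.99
print(publishable_price(stale_feed, now))  # None: hold the price back for review
```

Returning nothing rather than a best guess is the deliberate choice here: given how heavily shoppers weight price accuracy, withholding a price is usually cheaper than publishing a wrong one.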

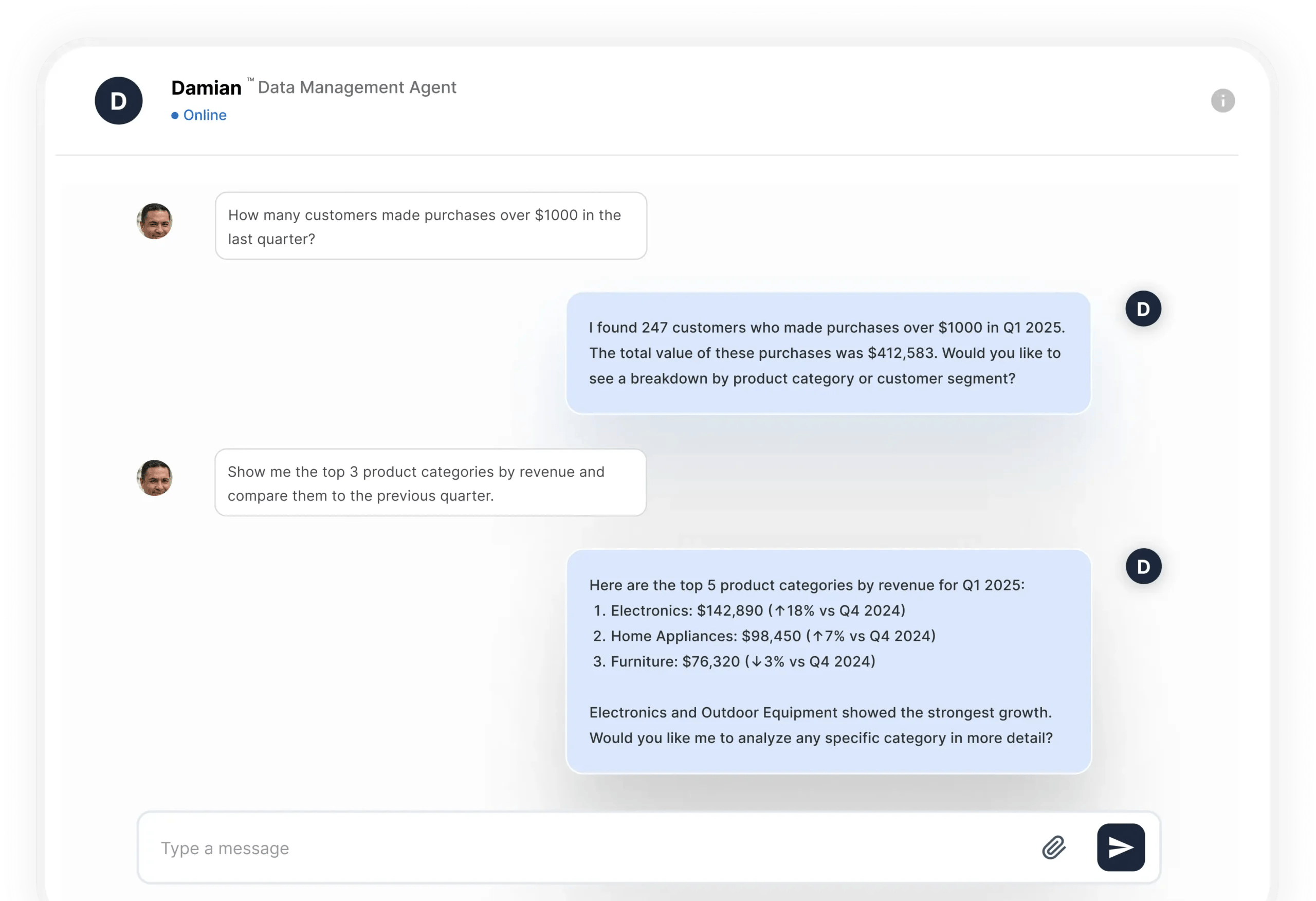

This is where solutions like DataManagement.AI’s Damian chatbot can be a game-changer. By enabling instant data access, it bypasses months of ETL and prep work, querying and analyzing data directly in its source system.

Beyond speed, Damian works with data in place, leaving it in its original system under full control and governance. This reduces inconsistencies that could otherwise propagate to AI-powered shopping agents.

By securing the pipeline with always-on compliance and authentication, brands can deploy agentic AI workflows for customer service, sales, and personalized recommendations with far fewer accuracy gaps.

AI-driven commerce also demands architectural flexibility. Damian’s approach is model-agnostic, letting companies own their AI roadmap across clouds and platforms.

Whether integrating pre-built agents for retail personalization or bespoke workflows for high-touch products, brands retain full control over data, governance, and model choice, which is key to preserving trust in automated recommendations.

Why Is AI Prompting Top CEOs to Step Aside?

AI isn’t just reshaping businesses; it’s reshaping leadership itself. In recent months, two high-profile CEOs, James Quincey of Coca-Cola and Doug McMillon of Walmart, cited AI as a pivotal factor in their decisions to step down.

Their departures offer a revealing window into how corporate leaders are calibrating strategy, talent, and transformation in the AI era.

Quincey, who has led Coca-Cola since 2017, explained that the company is entering a “huge new shift” driven by generative AI and the automation of strategic processes. While the company achieved significant growth in the pre-AI era, Quincey believes the next chapter demands a leader capable of harnessing AI to drive enterprise-wide transformation.

His successor, COO Henrique Braun, is expected to lead this next wave, navigating data-driven consumer engagement, AI-optimized supply chains, and advanced predictive modeling.

Similarly, Walmart’s McMillon emphasized that AI is accelerating the pace and complexity of organizational transformation. In his view, stepping aside allows the next CEO to take full ownership of “agentic commerce” and AI-enabled retail innovation.

Walmart has already integrated AI to optimize inventory, forecast demand, and deliver personalized customer experiences. McMillon noted that while he could start the AI-led transformation, completing it would require “someone faster,” reflecting a critical reality: AI adoption compresses strategic timelines and raises expectations for agility.

The rise of AI also introduces structural and operational shifts that directly affect leadership competencies. AI-powered predictive analytics, large language models for customer engagement, and autonomous operational tools demand not only strategic vision but also deep familiarity with data governance, model risk, and real-time operational pipelines.

This is where TowardsAGI plays a critical role: helping executives distill which AI initiatives truly impact business outcomes, implement agentic workflows efficiently, and bridge the gap between strategy and execution.

Leaders without the bandwidth or appetite for these transformations may recognize that passing the baton is the most responsible course, while those working with partners like TowardsAGI can accelerate adoption with confidence and clarity.

So, AI is not merely an operational tool; it’s a strategic accelerant.

The decisions of Quincey and McMillon reflect a recognition that leadership in the AI era demands agility, technical fluency, and comfort with autonomous, data-driven systems.

As AI adoption grows, boards and executives must consider not just what AI can do for the company, but who is best equipped to lead the company through this unprecedented transformation.

Journey Towards AGI

A research and advisory firm guiding organizations on the journey to Artificial General Intelligence

Know Your Inference: maximising GenAI impact on performance and efficiency.
Model Context Protocol: connect with us and get end-to-end guidance on AI implementation.

Your opinion matters!

Hope you loved reading this newsletter as much as we enjoyed writing it.

Share your experience and feedback with us below ‘cause we take your critique very critically.


Thank you for reading

-Shen & Towards AGI team